Roger Clarke's Web-Site

© Xamax Consultancy Pty Ltd, 1995-2024


Version of 2 June 2024

© Xamax Consultancy Pty Ltd, 2023-24

Available under an AEShareNet licence or a Creative Commons licence.

This document is at http://rogerclarke.com/ID/GT-IdEM.html

An early version of the theory in sections 3-6 was presented at ACIS'23, Wellington, 5-8 December 2023, with a supporting slide-set.

The term Authorization refers to a key element within the process whereby control is exercised over access to resources using information and communications technologies. It involves assigning a set of permissions or privileges to particular users or categories of users. The term Access Control refers to a further element, which provides users with the capability to exercise those permissions. The administration of data about users is the subject of a family of business processes, collectively referred to as Identity Management (IdM). The conventional approaches to IdM, despite a quarter-century of experience and refinements, are still far from satisfactory, with widespread abuse by both authorized users and imposters. Additional challenges have arisen as artefacts are increasingly being provided with the means to act with varying degrees of autonomy directly on real-world things. This article builds on a previously-published pragmatic metatheoretic model to develop and present a generic theory whose scope, and some of whose particulars, overcome key limitations of currently mainstream approaches. The generic theory is then applied to identify weaknesses in current practice, and show how the theory enables the conception, design and implementation of more effective products and services to manage entities and identities.

Information and Communications Technology (ICT) facilities have become central to the activities not only of organisations, but also of communities, groups and individuals. The end-points of networks are pervasive, and so is dependence on the resources that are accessed by means of ICT facilities. Information Systems (IS) applying ICT long ago moved beyond the processing of data and its use for the production of information. Support for inferencing has become progressively more sophisticated, more decision-making is being automated, and delegation to artefacts of autonomous action in the real world is increasing. Humanity's growing reliance on machine-readable data, and on computer-based data processing, inferencing, decision and action, is giving rise to a high degree of vulnerability and fragility, because of the scope for misuse, interference, compromise and appropriation. Accordingly, a critical need exists for effective management of access to ICT-based facilities.

Conventional approaches within the ICT industry have emerged and matured over the last half-century. Terms in common usage include identity management (IdM), identification, authentication, authorization and access control. The adequacy of contemporary techniques has been in considerable doubt throughout the first two decades of the current century. A pandemic of data breaches has spawned notification obligations in many jurisdictions since the first Security Breach Notification Law was enacted in California in 2003 (Karyda & Mitrou 2016). The resources of many organisations have proven to be highly susceptible to unauthorised access, including some of the most strongly motivated and resourced to maintain high standards (ITG 2023). The topic area continues to attract attention in the ICT security literature. Recent articles are, however, primarily concerned with specific issues or contexts, and only secondarily with conceptual or technical weaknesses in products and services, or empirical evidence of their effectiveness. For example, Pritee et al. (2024) and Aboukadri (2024) report on the use of machine learning (ML) techniques to incorporate empirically-based assessment of user behaviour into various phases of IdM. Other articles have had their focus on specific areas of vulnerability, such as 'privileged accounts' (Sindiren & Ciylan 2019) and 'delegated authorization' in health contexts (Zhao & Su 2024).

I contend that the many vulnerabilities in contemporary IdM arise from inadequacies in the conventional conception of the problem-domain, and in the models underlying architectural, infrastructural and procedural designs to support authorization and access control. My motivation in conducting the research reported here has been to contribute to improved IS practice and practice-oriented IS research. The IS notion is not interpreted in the narrowly technological sense evident in, for example, ISO/IEC 27001 2018: "[a] set of applications, services, information technology assets, or other information-handling components" (p. 5). Most IS have significant human elements, and making sense of an IS accordingly demands the adoption of a socio-technical perspective.

The method adopted in this article is to further develop a previously-published pragmatic metatheoretic model by extending it into the authentication and authorization spaces, to deliver a generic model of what is referred to in this article as Id/Entity Management (Id/EM), and to demonstrate the model's efficacy both in clarifying weaknesses in conventional IdM and in showing how to address them. The final step in the Id/EM process is access control, whose function is to enable the exercise of permissions by, but only by, authorized users. The access control step is dependent on an earlier authorization step, which establishes the permissions. Id/EM refers to the combination of architecture, infrastructure and process. It involves several additional steps necessary to support authorization and access control.

The article commences by reviewing the context and nature of authorization and access control, within their broader context of conventional IdM. This culminates in initial observations on issues that are relevant to the vulnerability to unauthorised access. An outline is then provided of a pragmatic metatheoretic model (PMM), highlighting the aspects of relevance to the analysis. Generic theories of authentication (GTA) and authorization (GTAz) are summarised, reflecting the insights of the PMM meta-model. This lays the foundations for proposals for adaptations to IS theory and practice in all aspects of identity management, including identification and authentication, but with particular emphasis placed on authorization and access control. The resulting Id/Entity Management (Id/EM) theory is then used as a lens through which weaknesses in conventional theory and practice in the area can be articulated, and means of addressing the weaknesses proposed.

This preliminary section provides a brief overview of conventional IdM concepts and processes. This is necessary to lay the foundation for an understanding of the differences that arise from the theory advanced later in the article.

A dictionary definition of authorization is "The action of authorizing a person or thing ..." (OED 1, first part); and authorize means "To give official permission for or formal approval to (an action, undertaking, etc.); to approve, sanction" (OED 3a) or "To give (a person or agent) legal or formal authority (to do something); to give formal permission to; to empower" (OED 3b). OED also recognises uses of 'authorization' to refer to the result of an authorization process: " ... formal permission or approval; an instance of this" (OED 1, second part). Overloading a term within a body of technical terminology creates unnecessary linguistic confusion; hence separate terms are preferable.

The remainder of this section draws on industry Standards to outline conventional usage of the term within the ICT industry, clarifies several aspects of the standards, summarises the various approaches in current use, and highlights aspects that need further articulation.

The authorization notion was first applied to ICT and IS in the 1960s. It has subsequently developed considerably, both deepening and passing through multiple phases. It has mostly been treated as being synonymous with the selective restriction of access by some entity to a resource, an idea usefully referred to as 'access control'. Originally, the resource being accessed was conceived as a physical space, such as enclosed land, a building or a room. In the context of ICT, however, the focus has been on categories of IS resources, such as services, data, software, and devices, whether local or remote.

The following quotations and paraphrases provide representative, short statements about the nature of the concept as it has been practised in ICT during the period c.1970 to 2020:

Authorization is a process for granting approval to a system entity to access a system resource (IETF 2007, at 1b(I), p. 29)

Access control is granting or denying an operation to be performed on a resource (ISO/IEC 27001 2018, p. 1, ISO/IEC 29146 2024)

Access control or authorization ... is the decision to permit or deny a subject access to system objects (network, data, application, service, etc.) ... The terms access control and authorization are used synonymously throughout this document (NIST800-162 2014, p. 2)

NIST's use of access control and authorization as synonyms for one another is problematic. Josang (2017, pp. 135-142) draws attention to ambiguities in the mainstream definitions in all of the ISO/IEC 27000 series, the X.800 Security Architecture, and the NIST Guide to Attribute Based Access Control (ABAC). To overcome the problems, Josang distinguishes between:

Josang's approach is consistent with, but clearer than, that of a leading textbook, which uses 'access control' at times to refer to a suite of processes, but then narrows its application to the operational aspects of limiting access to the entities that have been authorized to have access, and 'authorization' as the preparatory step of deciding who or what has what permissions:

Authorization: [ The function of determining the grant ] of a right or permission to a system entity to access a system resource. This function determines who is trusted for a given purpose (Stallings & Brown 2015, p. 116)

Access control: A collection of mechanisms that work together to create a security architecture to protect the assets of the information system. ... (Stallings & Brown 2015, p. 5) [ cf. Access management? ]

Access Control: [ The limitation of ] information system access to authorized users, processes acting on behalf of authorized users, or devices (including other information systems) and to the types of transactions and functions that authorized users are permitted to exercise ... We can view access control as the central element of computer security ... (Stallings & Brown 2015, pp. 26, 114)

In relation to the notion of what it is that a user is entitled to do, many terms are used in somewhat inconsistent and overlapping ways. In particular, IETF uses '[an] authorization'; NIST uses 'privilege' and '[an] authorization'; and ISO uses four terms as synonyms: "privilege [aka] access right [aka] permission mean [an] authorization to a subject to access a resource" (ISO/IEC 29146 2024). In this article, to overcome the resulting confusion, the term Permission is used to refer to a declaration of allowed Actions on a particular IS Resource by a particular Actor. This approach is consistent with the approach of the leading textbook quoted above:

Permission: An approval of a particular mode of access to one or more objects. Equivalent terms are access right, privilege, and authorization (Stallings & Brown 2015, p. 130)
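The Permission notion adopted here (a declaration of allowed Actions on a particular IS Resource by a particular Actor) can be illustrated with a minimal Python sketch. It separates the authorization step, which records Permissions, from the access control step, which consults them. The class, method and example names are illustrative assumptions and are not drawn from any of the standards quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Permission:
    """Declaration of allowed Actions on one IS Resource by one Actor."""
    actor: str
    resource: str
    actions: frozenset


class PermissionStore:
    """Hypothetical store that keeps authorization and access control apart."""

    def __init__(self):
        self._permissions = set()

    def authorize(self, actor, resource, actions):
        # Authorization step: establish the Permission.
        self._permissions.add(Permission(actor, resource, frozenset(actions)))

    def is_permitted(self, actor, resource, action):
        # Access control step: allow only what has been authorized.
        return any(
            p.actor == actor and p.resource == resource and action in p.actions
            for p in self._permissions
        )


store = PermissionStore()
store.authorize("alice", "payroll-file", {"view", "amend"})
```

In this sketch anything not explicitly authorized is refused, so a request by "alice" to delete the file, or any request by an unlisted Actor, is denied.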

In Table 1, correspondences are indicated among the terms used in important standards documents and in the theory proposed in this article. In standards documents, the terms 'subject' and 'system resource / object' are intentionally generic. NIST800-162 (2014, p. 3) refers to an 'object' as "an entity to be protected from unauthorized use" . Examples of IS resources referred to in that document include "a file" (p. vii), "network, data, application, service" (p. 2), "devices, files, records, tables, processes, programs, networks, or domains containing or receiving information; ... anything upon which an operation may be performed by a subject including data, applications, services, devices, and networks" (p. 7), "documents" (p. 9), and "operating systems, applications, data services, and database management systems" (p. 20).

Authorization processes depend on reliable information. Identity Management (IdM) and Identity and Access Management (IAM) are ICT-industry terms for frameworks comprising architecture, infrastructure and processes that enable the management of user identification, authentication, authorization and access control processes. IdM was an active area of development c.2000-05. It has been the subject of a considerable amount of standardisation, in particular in the ISO/IEC 24760 series (originally of 2011, completed by 2019). A definition provided by the Gartner consultancy is:

Identity management ... concerns the governance and administration of a unique digital representation of a user, including all associated attributes and entitlements (Gartner, extracted 29 Mar 2023, emphasis added)

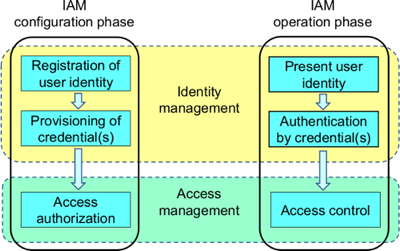

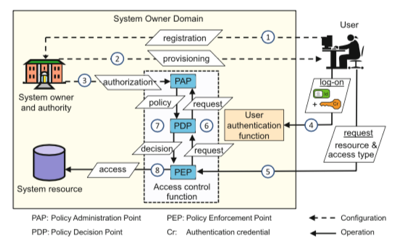

Figure 1 shows the process flow specified in NIST-800-63-3 (2017, p. 10). This is insufficiently precise to ensure effective and consistent application, because it contains both entity-types and states that they pass through, and shows relationships rather than processes. Josang (2017, p. 137, Fig. 1) provides a better-articulated overview, reproduced in Figure 2. This distinguishes the configuration (or establishment) phase from the operational activities of each of Identification, Authentication and Access. This is complemented by one particular mainstream scenario (Josang 2017, p. 143, Fig. 2) that illustrates a practical application of the concepts, and is reproduced in Figure 3.

Extracted from NIST-800-63-3 2017 (p. 10)

Extracted from https://en.wikipedia.org/wiki/File:Fig-IAM-phases.png. See also Josang (2017, p. 137), Fig. 1

Extracted from Josang (2017, p. 143), Fig. 2

The IdM industry long had a fixation on public key encryption. This grew out of single-signon facilities for multiple services within a single organisation, with the approach then being generalised to serve the needs of multiple organisations. The inadequacies of monolithic schemes gave way to federation across diverse schemes that specify common message standards and transmission protocols. Multiple alternative approaches are adopted on the supply side (Josang & Pope 2005). These are complemented and challenged by approaches on the demand-side that contest the dominance of the interests of corporations and government agencies and seek to protect the interests of users. These approaches include user-selected intermediaries, own-device as identity manager, and nymity services (Clarke 2004, Pfitzmann & Hansen 2006) and the more recent notion of Self Sovereign Identity (SSI) (Mühle et al. 2018).

The explosion in user-devices (desktops from c.1980, laptops from c.1990, mobile-phones from 2007 and tablets from 2010) has resulted in the present context in which some forms of IdM involve two separate but interacting processes, as in the FIDO Alliance specifications (FIDO 2022). Individuals authenticate locally to their personal device using any of several techniques designed for that purpose; and the device authenticates itself to the targeted service(s) through a federated, cryptography-based scheme. Conventional IdM models are revisited later in this article, when they are reconsidered in light of the theory proposed in the following sections.

Whether a request is granted or denied is determined by an authority. In doing so, the authority applies decision criteria. From the 1960s onwards, a concept of Mandatory Access Control (MAC) has existed, originating within the US Department of Defense. Instances of data are assigned a security-level, and each user is assigned a security-clearance-level. Processes are put in place whose purposes are to enable user access to data for which they have a requisite clearance-level, and to disable access to all other data. The security-level notion may be effective for documents with national security sensitivities, but not for the many different kinds of data handled by IS. In the IdM arena, the models in current use fall into a number of families, differentiated on the basis of the primary focus of the decision criteria. The acronyms for particular models of Access Control are listed for each alternative, and key features of each model are outlined below:

An early approach to access control was called Discretionary Access Control (DAC). It restricts access to IS Resources based on the identity of users who are trying to access them (although it may also provide each user with the power, or 'discretion', to delegate access to others). DAC matured into Identity Based Access Control (IBAC), which employs mechanisms such as access control lists (ACLs) to manage the Actors' Permissions to access the IS Resources. The Authorization process assumes that the identity of each Actor has been authenticated.
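A minimal sketch of an identity-based scheme with discretionary delegation is given below. It assumes that the identity presented has already been authenticated, and that a grantor can pass on only those actions it holds itself; both the class and that delegation rule are assumptions made for illustration, not features prescribed by any particular DAC or IBAC product.

```python
class AccessControlList:
    """ACL for one IS Resource: maps an authenticated identity to allowed actions."""

    def __init__(self, owner, actions=("read", "write")):
        self.entries = {owner: set(actions)}

    def delegate(self, grantor, grantee, actions):
        # Discretionary element: a holder may pass on actions it holds itself.
        requested = set(actions)
        if not requested <= self.entries.get(grantor, set()):
            raise PermissionError(f"{grantor} cannot delegate {sorted(requested)}")
        self.entries.setdefault(grantee, set()).update(requested)

    def check(self, identity, action):
        # Access decision keyed purely on the identity of the requestor.
        return action in self.entries.get(identity, set())


acl = AccessControlList("alice")
acl.delegate("alice", "bob", {"read"})
```

Because every Actor needs its own entry on every resource, the number of entries grows with the product of Actors and IS Resources, which is one way of seeing why such lists become costly to administer at scale.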

IBAC is effective in many circumstances, and continues to be used. It scales poorly, however, and large organisations have sought greater efficiency in managing access. From 1992-96 onwards, Role Based Access Control (RBAC) became mainstream in large systems (Pernul 1995, Sandhu et al. 1996, Lupu & Sloman 1997, ANSI 2012). In such schemes, an Actor is assigned to a Role, and each Role has access to specified IS Resources. This offers efficiency where significant numbers of individuals perform essentially the same functions, whether all at once or over a period of time. Application of RBAC in the highly complex setting of health data is described by Blobel (2004). See also ISO 22600 Parts 1 and 2 (2014). Blobel provides examples of roles, including (p. 254):

Two significant weaknesses of RBAC are that Role is a construct and lacks the granularity needed in some contexts, and that environmental factors are excluded. To address these weaknesses, Attribute Based Access Control (ABAC) has emerged since c.2000 (Li et al. 2002): "ABAC ... controls access to [IS Resources] by evaluating rules against the attributes of entities ([Actor] and [IS Resource]), operations, and the environment relevant to a request" (NIST-800-162 2014, p. vii). "Attribute-based access control (ABAC) [makes] it possible to overcome limitations of traditional role-based and discretionary access controls" (Schlaeger et al. 2007, p. 814). This includes the capacity to be used with or without the requestor's identity being disclosed, by using an "opaque user identifier" (p. 823).

NIST800-162 (2014) provides, as examples of Attributes of an Actor, "name, unique identifier, role, clearance" (p. 11), all of which relate to human users, and "current tasking, physical location, and the device from which a request is sent" (p. 23), which are environmental factors. Examples of IS Resource attributes given include "document ... title, an author, a date of creation, and a date of last edit, ... owning organization, intellectual property characteristics, export control classification, or security classification" (p. 9). Examples of environmental conditions include "current date, time, [actor/IS resource] location, threat [level], and system status" (pp. 24, 29).
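The way an ABAC decision combines Actor, IS Resource and environmental attributes can be sketched as follows. The attribute names echo the NIST examples quoted above, but the numeric clearance scale, the office-hours window and the rule itself are assumptions made purely for illustration; they are not taken from NIST800-162 or from XACML.

```python
from datetime import time


def may_read(actor, resource, env):
    """Hypothetical rule evaluated against attributes rather than identity."""
    return (
        actor["clearance"] >= resource["security_classification"]
        and actor["organisation"] == resource["owning_organisation"]
        and time(8, 0) <= env["time"] <= time(18, 0)
        and env["threat_level"] != "high"
    )


# One rule per operation; an operation with no rule is denied.
RULES = {"read": may_read}


def decide(actor, resource, action, env):
    rule = RULES.get(action)
    return rule is not None and rule(actor, resource, env)


analyst = {"clearance": 2, "organisation": "Agency A"}
report = {"security_classification": 2, "owning_organisation": "Agency A"}
```

Note that the decision function never consults a name or UserID, which is consistent with the observation that ABAC can operate without the requestor's identity being disclosed.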

Industry standards and protocols exist, to support implementation of authorization processes, and to enable interoperability among organisationally and geographically distributed elements of information infrastructure. Two primary examples are OASIS SAML (Security Assertion Markup Language), a syntax specification for Assertions about an Actor, supporting the Authentication of Identity and Attribute Assertions, and Authorization; and OASIS XACML (eXtensible Access Control Markup Language), which provides support for Authorization processes at a deeper level of granularity.

Beyond ABAC, an even more finely grained approach is adopted by Task-Based Access Control (TBAC). This associates Permissions with a Task, e.g. in a government agency that administers welfare payments, with a case-identifier; and in an incident management system, with an incident-report identifier; in each instance combined with some trigger such as a request by the person to whom the data relates (the 'data-subject'), or a Task allocation to an individual staff-member by a workflow algorithm. See Thomas & Sandhu (1997) and Fischer-Huebner (2001, p. 160). To date, however, TBAC appears to have achieved limited adoption.
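The binding of Permissions to a Task can be sketched as below. The sketch assumes that a Permission comes into existence when a workflow allocates a case to a staff-member and lapses when the Task is completed; that lifecycle, and all names used, are assumptions for illustration rather than a rendering of any published TBAC specification.

```python
class TaskPermissions:
    """Permissions exist only for the (staff-member, case) pairs currently allocated."""

    def __init__(self):
        self._active = {}  # (staff_id, case_id) -> set of allowed actions

    def allocate(self, staff_id, case_id, actions):
        # Trigger: a workflow allocates the Task to a staff-member.
        self._active[(staff_id, case_id)] = set(actions)

    def complete(self, staff_id, case_id):
        # Assumed lifecycle: the Permissions lapse when the Task ends.
        self._active.pop((staff_id, case_id), None)

    def is_permitted(self, staff_id, case_id, action):
        return action in self._active.get((staff_id, case_id), set())


tasks = TaskPermissions()
tasks.allocate("staff-7", "case-123", {"view", "amend"})
```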

The present article treats the following aspects as being for the most part out-of-scope:

However, the following two aspects are relevant to the analysis that follows.

The above broad description of the conventional approach to Authorization adopts an open interpretation of the IS Resource in respect of which an Actor is granted Permissions. In respect of processes, a Permission might apply to all available functions, or each function (e.g. view data, create data, amend data, delete data) may be the subject of a separate permission.

In respect of Data, a hierarchy exists. For example, a structured database may contain data-files, each of which contains data-records, each of which contains data-items. The Unix file-system, for example, distinguishes separate functions at the file-level (read, write and execute), with write encompassing all of create, amend, delete and rename. A Permission may apply to all Data-Records in a Data-File, but it may apply to only some, based on criteria such as a Record-Identifier, or the content of individual Data-Items. Hence, visualising a Data-File as a table, a Permission may exclude some rows (Records) and/or some columns (Data-Items). There is a modest literature on the granularity of data-access permissions, e.g. Karjoth et al. (2002), Zhong et al. (2011).
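Visualising a Data-File as a table, a Permission that excludes some rows and some columns can be sketched as a filter. The function and the sample records are hypothetical and serve only to illustrate the row and column granularity described above.

```python
def apply_permission(records, row_allowed, visible_items):
    """Return only the permitted Records, reduced to the permitted Data-Items."""
    return [
        {item: record[item] for item in visible_items if item in record}
        for record in records
        if row_allowed(record)
    ]


records = [
    {"id": 1, "branch": "north", "name": "Ann", "salary": 100},
    {"id": 2, "branch": "south", "name": "Bob", "salary": 120},
]

# Permission: Records of the 'north' branch only, and only the id and name items.
view = apply_permission(records, lambda r: r["branch"] == "north", ["id", "name"])
```

Here the row criterion is based on the content of a Data-Item (the branch), and the salary column is withheld regardless of which rows are returned.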

The Authorization process assumes the existence of an Authority that can and does make decisions about whether to grant Actors specific Permissions in relation to particular IS Resources. In many cases, the Authority is simply assumed to be the operator that manages the relevant data-holdings and/or exercises Access Control over those data-holdings. In other cases, the operation may be outsourced to an agent but the decisions performed or at least supervised by the principal.

Contexts exist, however, in which the Authority is some other party entirely. One example is a regulatory agency. Another example is the entity to which the data relates. This is the case in schemes that include an opt-out facility at the option of the person that the data relates to (often referred to as the data subject), and schemes that are consent-based (also referred to as opt-in). Another recent example is the 'consumer data rights' schemes introduced into financial services sectors in some countries. In all of these cases, the Authority with respect to each Record in the data-holdings is the data subject, and the system operator implements the criteria set by that person. Although such patterns are most common in the case of human entities, they also arise with organisational entities.

An important example is in health-care settings, where all personal data is sensitive, and some, such as that relating to mental health, sexually-transmitted diseases and genetic material, is highly sensitive. A generic model is described in Clarke (2002b, at 6.) and Coiera & Clarke (2004). In the simplest case, each individual has a choice between the following two criteria:

Further articulation might provide each individual with a choice between the following two criteria:

Each specific denial or consent is expressed in terms of specific attributes, which may define:

A fully articulated model supports a nested sequence of consent-denial or denial-consent pairs ('Yes/No, except ... unless ...'). These more complex alternatives enable a patient to have confidence that some categories of their health data are subject to access by a very limited set of treatment professionals, and in quite specific circumstances. Schemes of this kind are reviewed in Iyer et al. (2021), who investigate the expressiveness of negated conditions (e.g. not on leave) compared with negative authorizations (default denial unless one of a set of authorizing rules applies). Conventional Authorization models, however, are generally unable to support consent as an Authorization decision criterion.
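The nested 'Yes/No, except ... unless ...' structure can be sketched as a recursive rule. The sketch assumes that the first applicable exception at each level governs the outcome, and the roles and data categories are invented for the example; neither is drawn from the cited consent models.

```python
from dataclasses import dataclass, field
from typing import Callable


def always(request):
    return True


@dataclass
class Rule:
    applies: Callable[[dict], bool]  # when this rule is relevant to a request
    allow: bool                      # outcome if no nested exception applies
    exceptions: list = field(default_factory=list)


def evaluate(rule, request):
    # Assumption: the first applicable exception overrides the enclosing rule.
    for exception in rule.exceptions:
        if exception.applies(request):
            return evaluate(exception, request)
    return rule.allow


# 'Deny, except treating clinicians, unless the data concerns mental health.'
policy = Rule(always, allow=False, exceptions=[
    Rule(lambda r: r["role"] == "treating_clinician", allow=True, exceptions=[
        Rule(lambda r: r["category"] == "mental_health", allow=False),
    ]),
])
```

Starting instead from a general consent with nested denials requires only that the top-level rule be given `allow=True`.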

The next section outlines a meta-model that has been devised to support IS practice and practice-oriented IS research. The subsequent sections extend this meta-model to express Generic Theories of Authentication (GTA) and of Authorization (GTAz).

Previous work by the author (Clarke 2021, 2022, 2023a, 2023b) proposes a model that, it is argued, reflects the viewpoint adopted by IS practitioners, and that is designed to support understanding of and improvements to IS practice and practice-oriented IS research. The model embodies the socio-technical system view, whereby organisations are recognised as comprising people using technology, each affecting the other, with effective IS design depending on the integration of the two. The model is 'pragmatic', as that term is used in philosophy -- that is, it is concerned with understanding and action, rather than merely with describing and representing. It is also 'metatheoretic' (Myers 2018, Cuellar 2020), because it builds on a set of ontological, epistemological and axiological assumptions. Care is taken in the choice of terms, their clear definition, and their interweaving into a comprehensive, coherent and internally consistent terminology. This section provides a brief overview of the meta-model. Defined terms are highlighted using Capitals and Italics. All definitions are provided in Figures, and consolidated in an associated Glossary (Clarke 2024a).

As depicted in Figure 4, the Pragmatic Metatheoretical Model (PMM) distinguishes a Real World from an Abstract World. The Real World comprises Phenomena of two kinds, Things and Events, each of which has Properties. These can be sensed by humans and artefacts with varying reliability. Abstract Worlds are depicted as being created at two levels. The Conceptual Model level reflects the modeller's perception of Real World Phenomena. At this level, the notions of Entity and Identity correspond to the category Things, and Transaction to the category Events. Figure 5A provides key definitions.

After Clarke (2021)

A vital aspect of this meta-model is the distinction between Entity and Identity. An Entity corresponds with a Physical Thing. An Identity, on the other hand, corresponds to a Virtual Thing. This is a somewhat novel proposal. A Virtual Thing is a particular presentation of a Physical Thing, most commonly when it performs a particular Role -- i.e., adopts a particular pattern of behaviour. For example, the NIST (2006) definition of authentication distinguishes a "device" (in the terms of this model, an artefactual Entity) from a "process" (an artefactual Identity), and the Gartner definition of IdM refers to "a digital representation [i.e. a human Identity] of a user [i.e. a human Entity]". An Id/Entity-Instance is a particular occurrence of an Id/Entity. An Entity-Instance may adopt one Identity-Instance in respect of each role it performs, or it may use the same Identity-Instance when performing multiple and even all roles. Conversely, an Identity-Instance may be assumed by multiple Entities at the same time, and/or over time. For example, within a corporation, different human Entity-Instances succeed one another in adopting the Identity CEO, whereas the Identity Company Director is adopted by multiple human Entity-Instances not only successively, but also at the same time. For simplicity of expression, the remainder of this article uses Id/Entity for both the generic concepts and instances of them.
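The many-to-many relationship between Entities and Identities can be sketched as a small registry, using the CEO and Company Director example from the text. The class and the personal names are hypothetical, and the sketch tracks only current adoptions, not their history.

```python
from collections import defaultdict


class IdEntityRegistry:
    """Tracks which Entity-Instances currently adopt which Identity-Instances."""

    def __init__(self):
        self._identities_of = defaultdict(set)  # entity -> identities
        self._entities_of = defaultdict(set)    # identity -> entities

    def adopt(self, entity, identity):
        self._identities_of[entity].add(identity)
        self._entities_of[identity].add(entity)

    def relinquish(self, entity, identity):
        self._identities_of[entity].discard(identity)
        self._entities_of[identity].discard(entity)

    def identities_of(self, entity):
        return set(self._identities_of[entity])

    def entities_of(self, identity):
        return set(self._entities_of[identity])


registry = IdEntityRegistry()
registry.adopt("Ann", "CEO")
registry.adopt("Ann", "Company Director")
registry.adopt("Bob", "Company Director")  # one Identity, several Entities at once
registry.relinquish("Ann", "CEO")
registry.adopt("Bob", "CEO")               # succession in the CEO Identity
```

A conventional IdM record that equates one user with one 'identity' cannot represent either side of this relationship, which is the point the distinction is intended to make.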

The Data Model Level operationalises the relatively abstract ideas in the Conceptual Model level. This moves beyond a design framework to fit with data-modelling and data management techniques and tools, and to enable specific operations to be performed to support organised activity. The PMM uses the term Information specifically for a sub-set of Data: that Data that has value (Davis 1974, p. 32, Clarke 1992b, Weber 1997, p. 59). Data has value in only very specific circumstances. Until it is in an appropriate context, Data is not Information, and once it ceases to be in such a context, Data ceases to be Information. Assertions are putative expressions of knowledge about one or more elements of the metatheoretic model. Figure 5A provides definitions of key terms.

A further notion that assists in understanding models of human beings is the Digital Persona. This means, conceptually, a model of an Id/Entity's public personality based on Data and maintained by Transactions, and intended for use as a proxy for the Id/Entity. Operationally, a Digital Persona is a Data-Record that is sufficiently rich to provide the record-holder with an adequate image of the represented Id/Entity. A Digital Persona may be Projected by the Id/Entity using it, or Imposed by some other party, such as an employer, a marketing corporation, or a government agency (Clarke 1994a, 2014). As the term 'identity' is used in conventional IdM, to refer to "a unique digital representation of a user", it is an Imposed Digital Persona. Many organisations have become highly dependent on the particular Digital Persona they have developed for each party that they deal with. The data is typically drawn from many sources, each with at best moderate data quality, and which together evidence varying and often inadequate inter-source compatibility (Clarke 2019).

The concepts in the preceding paragraphs declare the model's ontological and epistemological assumptions. A third relevant branch of philosophy is axiology, which deals with 'values'. The values in question are those of both the system sponsor and stakeholders. The stakeholders include human participants in the particular IS ('users'), but also those people who are affected even though they are not themselves participants. These are referred to in some academic fields as 'usees' (Berleur & Drumm 1991 p. 388, Clarke 1992a, Fischer-Huebner & Lindskog 2001, Baumer 2015). The interests of users and usees are commonly in at least some degree of competition with those of social and economic collectives (groups, communities and societies of people), of the system sponsor, and of various categories of formalised organisations. Generally, the interests of the most powerful of those players dominate.

Further developed from Clarke (2021)

The basic PMM was extended in Clarke (2022), by refining the Data Model notion of Record-Key to distinguish two further concepts: Identifiers as Record-Keys for Identity-Records (corresponding to Virtual Things in the Real World), and Entifiers as Record-Keys for Entities (corresponding to Physical Things).

A computer is an artefactual Entity, for which a Processor-ID may exist, failing which its Entifier may be a proxy, such as the Network Interface Card Identifier (NIC ID) of, say, an installed Ethernet or Wifi card, or its IP-Address. A process is an artefactual Identity, for which a suitable Identifier is a Process-ID, or a proxy such as its IP-Address concatenated with its Port-Number.

For human Entities, the primary form of Entifier is a biometric, although the Processor-ID of an embedded chip is another possibility (Clarke 1994b p. 31, Michael & Michael 2014). For users (whether a human or an artefact), a UserID or LoginID is a widely-used proxy Identifier.

This leads to distinctions between Identification processes, which involve the provision or acquisition of an Identifier, and Entification processes, for which an Entifier is needed. In circumstances in which a reference may be to either an Identifier of an Identity, or an Entifier of an Entity, the short term Id/Entifier is used. The acquired Id/Entifier can then be used as the Record-Key for a new Data-Record, or as the means whereby the Id/Entity can be associated with a particular, already-existing Id/Entity-Record. The terms Entifier and Entification are uncommon, but have been used by the author in refereed literature since Clarke (2002a) and applied in over 30 articles within the Google Scholar catchment, which together have over 500 citations. Key terms are defined in Figure 5B.
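The use of an acquired Id/Entifier as a Record-Key can be illustrated with a minimal Python sketch. It keeps Entity-Records and Identity-Records in separate stores, and uses an Id/Entifier either to find an already-existing record or to key a new one. The names `DataRecord`, `RecordStore` and `associate`, and the sample Id/Entifier values, are hypothetical and chosen only for this illustration; they are not drawn from the PMM papers.

```python
from dataclasses import dataclass, field


@dataclass
class DataRecord:
    """An Id/Entity-Record: a Record-Key plus a set of Data-Items."""
    record_key: str
    data_items: dict = field(default_factory=dict)


class RecordStore:
    """Holds the Data-Records for one category (Entities or Identities)."""

    def __init__(self, kind: str):
        self.kind = kind      # "Entity" or "Identity" (labels assumed here)
        self.records = {}     # Record-Key -> DataRecord

    def associate(self, id_entifier: str):
        """Use an acquired Id/Entifier either to find the existing record
        or as the Record-Key for a new one. Returns (record, created)."""
        if id_entifier in self.records:
            return self.records[id_entifier], False
        record = DataRecord(record_key=id_entifier)
        self.records[id_entifier] = record
        return record, True


# Entification: an Entifier (here a NIC ID acting as proxy) keys an Entity-Record.
entities = RecordStore("Entity")
# Identification: an Identifier (here a UserID) keys an Identity-Record.
identities = RecordStore("Identity")

_, created_first = entities.associate("nic:00-1A-2B-3C-4D-5E")
_, created_again = entities.associate("nic:00-1A-2B-3C-4D-5E")
_, created_user = identities.associate("userid:jsmith")
```

The second call with the same Entifier returns the existing Entity-Record and does not create a duplicate, which is the behaviour the paragraph above describes.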

Further developed from Clarke (2022)

Two further papers extend the PMM in relation to Authentication. Clarke (2023b) argues that the concept needs to encompass Assertions of all kinds, rather than just Assertions involving Id/Entity. That article presents a Generic Theory of Authentication (GTA), defining it as a process that establishes a degree of confidence in the reliability of an Assertion, based on Evidence. The GTA distinguishes various categories of Assertion that may or may not involve Id/Entity, including Assertions of Fact, Content Integrity, and Value. An item of Evidence is referred to as an Authenticator. A Credential is a category of Authenticator that carries the imprimatur of some form of Credential Authority. A Token is a recording medium on which useful Data is stored. Examples of 'useful Data' in the current context include Id/Entifiers, Authenticators and Credentials.
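A brief Python sketch may help to fix the GTA vocabulary. It models an Authenticator as an item of Evidence, treats a Credential as an Authenticator that names a Credential Authority, and has the Authentication process return a degree of confidence instead of a true/false verdict. The numeric strength scale and the rule for combining items of Evidence are assumptions made purely for illustration; the GTA itself does not prescribe them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Authenticator:
    """An item of Evidence offered in support of an Assertion."""
    description: str
    strength: float                            # 0.0 to 1.0; the scale is assumed
    credential_authority: Optional[str] = None

    @property
    def is_credential(self) -> bool:
        # A Credential is an Authenticator carrying an authority's imprimatur.
        return self.credential_authority is not None


def authenticate(assertion: str, evidence: list) -> float:
    """Return a degree of confidence in the Assertion.

    Assumed rule: items of Evidence are treated as independent, so the
    combined confidence is 1 minus the product of the individual shortfalls.
    The assertion text is carried only as a label in this sketch.
    """
    shortfall = 1.0
    for item in evidence:
        shortfall *= 1.0 - item.strength
    return 1.0 - shortfall


evidence = [
    Authenticator("utility bill in the claimed name", 0.5),
    Authenticator("passport", 0.8, credential_authority="passport office"),
]
confidence = authenticate("This person is customer 1234", evidence)
```

With no Evidence at all the function returns 0.0, and no finite amount of Evidence takes the result to exactly 1.0 unless an item is itself rated 1.0, which reflects the theory's emphasis on degrees of reliability.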

The second of the two papers in logical order (Clarke 2023a) defines an Id/Entity Assertion as a claim that a particular Virtual Thing or Physical Thing is appropriately associated with one or more Id/Entity-Records. An Id/Entity Assertion is subjected to Id/Entity Authentication processes, to establish the reliability of the claim. Generally, 'appropriately associated' means that the Thing is the Thing, or one of the Things, that commonly uses a particular Identifier (e.g. a personal name), or that has been assigned a particular Identifier to use in such circumstances (e.g. a customer-code or a UserID).

Also of relevance is the concept of a Property Assertion, whereby a particular Data-Item-Value in a particular Id/Entity Record is claimed to be appropriately associated with, and to reliably represent, a particular Property of a particular Virtual Thing or Physical Thing.

Real-World Properties, and Abstract-World Id/Entity Attributes, represented by Data-Items, are of many kinds. A category of particular importance in commercial transactions is a Principal-Agent Relationship Assertion, whereby a claim is made that a particular Virtual Thing or Physical Thing has the Property of authority to perform an Action on behalf of another particular Thing. An agent may be a Physical Thing (a person or a device), or a Virtual Thing (a person currently performing a particular role, or a computer process). Chains of principal-agent relationship assertions are common, each of which may require Authentication. Figure 5C provides definitions of key terms.
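The point that each link in a chain of Principal-Agent Relationship Assertions may require Authentication can be sketched in Python as follows. The sketch assumes that some Authentication process, not shown, has produced a set of accepted assertions; the class and function names and the sample parties are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgencyAssertion:
    """Claim that `agent` has authority to perform `action` for `principal`."""
    agent: str
    principal: str
    action: str


def chain_is_authenticated(chain, ultimate_principal, action, authenticated):
    """Walk the chain from the acting agent towards the ultimate principal.

    `authenticated` is the set of assertions already accepted by an
    Authentication process that is assumed to exist elsewhere.
    """
    if not chain:
        return False
    for link, next_link in zip(chain, chain[1:]):
        # Each link's principal must be the agent in the next link.
        if link.principal != next_link.agent:
            return False
    if chain[-1].principal != ultimate_principal:
        return False
    # Every link must cover the Action and must itself be authenticated.
    return all(link.action == action and link in authenticated for link in chain)


chain = [
    AgencyAssertion("payments-process", "accounts-clerk", "pay-invoice"),
    AgencyAssertion("accounts-clerk", "Acme Pty Ltd", "pay-invoice"),
]
fully_checked = chain_is_authenticated(chain, "Acme Pty Ltd", "pay-invoice", set(chain))
partly_checked = chain_is_authenticated(chain, "Acme Pty Ltd", "pay-invoice", {chain[0]})
```

In this example a computer process (a Virtual Thing) acts for a clerk, who in turn acts for the organisation; the chain is accepted only when both assertions have been authenticated.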

From Clarke (2023b, 2023a)

To lay the foundation for an assessment of the suitability of the conventional approaches to authorization described earlier in this article, the theory reviewed in this section is extended in the following section to encompass Authorization.

This section applies the Pragmatic Metatheoretic Model (PMM) and the Generic Theory of Authentication (GTA), outlined above, and presents a new Generic Theory of Authorization (GTAz). Some aspects of this theory have been presented previously, in Clarke (2023c). Figure 5D defines additional terms. All definitions are reproduced in an associated Glossary (Clarke 2024a).

An Actor may or may not be granted a Permission to perform an Action on a Resource. A process needs to be performed to determine whether or not that will be the case. The term Authorization is used to refer to that process. The entity that makes the determination is the Authorization Authority. The decision has no outcomes other than the recording of the determination for future use.

An Actor that has a Permission needs to be provided with the capability to exercise it. An Entity that can provide that capability needs to receive a request from the Actor, and check the request, and the recorded information about the Actor and its Permissions. If the checks are satisfied, the Entity establishes a Session that enables the Permissions to be exercised. That process is referred to as Access Control.
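The separation between the two processes can be sketched in Python. Authorization only records a determination; Access Control later reads the recorded determinations and, if its checks are satisfied, establishes a Session. The store is called `Account` here, following the term used for the intermediating data store later in the article; all other names, and the boolean `authenticated` flag standing in for the outcome of an Authentication step, are assumptions of this sketch.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Permission:
    actor: str
    action: str
    resource: str


@dataclass
class Account:
    """Recorded determinations: written by Authorization, read by Access Control."""
    permissions: set = field(default_factory=set)


@dataclass
class Session:
    actor: str
    permissions: frozenset


def authorize(account, actor, action, resource, approve):
    """Authorization: the Authorization Authority's decision (`approve`) is
    recorded for future use and has no other outcome."""
    if approve:
        account.permissions.add(Permission(actor, action, resource))


def access_control(account, actor, authenticated):
    """Access Control: check the request against the recorded Permissions
    and, if the checks are satisfied, establish a Session."""
    granted = frozenset(p for p in account.permissions if p.actor == actor)
    if not authenticated or not granted:
        return None
    return Session(actor, granted)


account = Account()
authorize(account, "userid:jsmith", "read", "customer-records", approve=True)
session = access_control(account, "userid:jsmith", authenticated=True)
refused = access_control(account, "userid:jsmith", authenticated=False)
```

Keeping `authorize` free of any side-effect other than the recorded Permission mirrors the statement that the decision has no outcomes beyond the recording of the determination.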

A comprehensive model of these processes needs to encompass the possibility (and, in practice, likelihood) that an Actor other than the intended Actor may endeavour to take advantage of the Permissions that the intended Actor has been granted. Such an Actor is referred to as an Imposter. Checks undertaken as part of the Operational Phase need to ensure that the intended Actors are provided with a Session and appropriate Permissions, and Imposters are not. The term Masquerade refers to Actions that are performed by an Imposter.

A further category of abuse needs to be recognised and defined. A Permission may be absolute, but in many cases it is qualified in some way. It may apply to all Instances of a Resource, some, or just one (e.g. a single Data-Record). It may apply to any general Purpose (such as maintenance of an organisation's relationships with its customers) or Task (a particular business process such as establishment of a Data-Record for a new customer) or a quite specific Purpose (such as notifying customers of an impending change in Terms of Service) or Task (e.g. display of Data-Items relevant to a particular transaction). A Permission Breach occurs if the Actor has broader access than that defined in the Permissions, or performs an Action for a Purpose or Task that is not encompassed by the Permission.
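A qualified Permission and the corresponding test for a Permission Breach can be sketched in Python. The representation of the qualifications (a set of permitted Instances and a set of permitted Purposes or Tasks, with `None` meaning unqualified) is an assumption made for this sketch, as are the names used.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class QualifiedPermission:
    action: str
    resource: str
    instances: Optional[frozenset] = None   # None means all Instances
    purposes: Optional[frozenset] = None    # None means any Purpose or Task


def is_permission_breach(permission, action, resource, instance, purpose) -> bool:
    """True if the Action falls outside what the Permission encompasses."""
    if action != permission.action or resource != permission.resource:
        return True
    if permission.instances is not None and instance not in permission.instances:
        return True
    if permission.purposes is not None and purpose not in permission.purposes:
        return True
    return False


perm = QualifiedPermission(
    "display", "customer-record",
    instances=frozenset({"C-1001"}),
    purposes=frozenset({"process-refund"}),
)
within = is_permission_breach(perm, "display", "customer-record", "C-1001", "process-refund")
browsing = is_permission_breach(perm, "display", "customer-record", "C-2002", "process-refund")
wrong_purpose = is_permission_breach(perm, "display", "customer-record", "C-1001", "curiosity")
```

A legitimately logged-in Actor who displays a different customer's record, or the right record for an unrelated Purpose, is detected as being in breach, which is a distinct case from Masquerade by an Imposter.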

Further developed from Clarke (2023c)

With key underlying concepts defined, a comprehensive process can be described to apply them to the purpose of enabling appropriate users appropriate access to appropriate resources, and denying inappropriate access.

The notion of Identity Management (IdM) was discussed earlier in this article. IdM is intended as a comprehensive architecture for relevant infrastructure and processes. The earlier discussion noted various inadequacies in conventional conceptualisations, models and terminologies. Josang's (2017) Phase Model, reproduced in Figure 2, endeavoured to address many of these inadequacies. Building on Josang's work, and applying the Pragmatic Metatheoretic Model (PMM), Generic Theory of Authentication (GTA) and Generic Theory of Authorization (GTAz) outlined above, this section presents a refinement and further articulation of Josang's model, which is referred to here as Id/Entity Management (Id/EM).

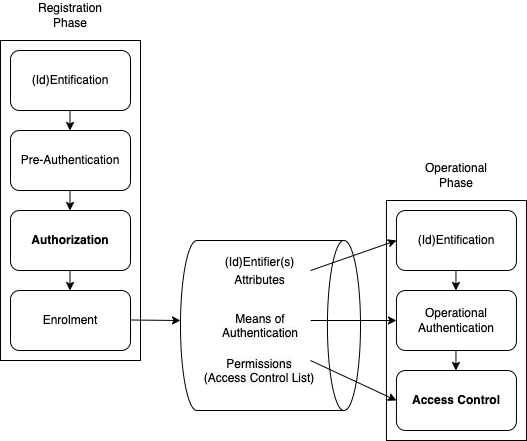

Figure 6 provides a diagrammatic overview of the field as a whole. Within Id/EM, a Registration Phase and an Operational Phase are distinguished.

The Registration Phase is conducted when new, or renewed, Permissions are sought for a new, or renewing, Actor. The Phase comprises four Steps:

The Operational Phase is conducted on each occasion that an Actor seeks to exercise previously-established Permissions. It comprises:

Figure 5E provides definitions of further terms, to complete the exposition of the theoretical model of Authorization, Access Control and Id/Entity Management.

Further developed from Clarke (2023c)

The following section further articulates some aspects of the Id/EM Process that are of particular significance.

Authorization, the third step of the Registration Phase depicted in Figure 6, is a primary focus of this article. Adopting the modified definitions in the IETF and NIST standards proposed in Josang (2017), a clear distinction is drawn between the Authorization process (discussed in this section) and the final step of the Operational Phase, Access Control. Authorization is the process whereby an Authorization Authority decides whether or not to determine that an Actor has one or more Permissions in relation to a particular Resource. A Permission may be specific to an Actor-Instance, or the Actor-Instance may be assigned to a previously-defined Role and inherit Permissions associated with that Role. A Permission may be, and to represent an effective safeguard needs to be, provided for, and only for, a particular Purpose, Use, Function or Task.
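The two ways in which an Actor-Instance can hold Permissions, directly or by inheritance from a Role, can be sketched in Python. Each Permission is represented here as an (Action, Resource, Purpose) tuple; that representation, the function name and the sample Roles are assumptions of this sketch. An Actor-Instance may be assigned to several Roles at once.

```python
def effective_permissions(actor, direct, role_members, role_permissions):
    """Permissions specific to the Actor-Instance, plus those inherited
    from every Role to which the Actor-Instance is assigned."""
    result = set(direct.get(actor, set()))
    for role, members in role_members.items():
        if actor in members:
            result |= role_permissions.get(role, set())
    return result


# Each Permission is an (Action, Resource, Purpose) tuple in this sketch.
direct = {"userid:jsmith": {("read", "own-leave-balance", "self-service")}}
role_members = {
    "nurse-practitioner": {"userid:jsmith"},
    "fire-warden": {"userid:jsmith", "userid:alee"},
}
role_permissions = {
    "nurse-practitioner": {("read", "patient-record", "treatment")},
    "fire-warden": {("read", "floor-roster", "evacuation")},
}

jsmith = effective_permissions("userid:jsmith", direct, role_members, role_permissions)
alee = effective_permissions("userid:alee", direct, role_members, role_permissions)
```

Because every tuple carries a Purpose, an inherited Permission remains limited to the Purpose for which the Role was granted it.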

Categories of Role include:

The Authorization Authority is commonly the operator of an Information System, as principal, or the operator of an Id/Entity Management service acting as an agent for a principal. Many other possibilities exist, however, such as a regulatory agency, a professional registration board and an individual to whom personal data relates.

An Actor that is assigned Permissions may be any Physical Thing or Virtual Thing, provided that the Actor has the capability to perform relevant Actions in relation to relevant Resources, or alternatively has an agent that can do so on behalf of the Actor. Generally, human Actors have the capability to act. Many artefacts lack suitable actuators, but devices are increasingly being provided with the capability to act. Hence, artefactual Actors are increasingly common.

An Actor may therefore take many forms, in particular:

but not:

Generally, any Actor capable of Action can perform as an agent for a principal. Generally, however, an artefactual Actor cannot act as a principal. This is because legal regimes generally preclude artefacts from bearing responsibility for actions and outcomes, and from liability for harm arising from an action. They cannot be subject to provisions of the criminal law nor be bound by contract. Some commentators have called for such constraints to be removed; but deep-rooted legal assumptions create considerable challenges.

Two broad categories of Resources need to be distinguished, which are subject to distinct forms of Actions:

The Registration Phase paves the way for an Actor to be given the capacity to perform an Action, expressed as the entitlement called a Permission. The Operational Phase may be instigated at any time after Registration is complete, and as many times and as frequently as suits the circumstances. The first step, Id/Entification, exhibits no material differences from the first step in the Registration Phase. The second step, Operational Authentication, takes advantage of the investment undertaken during the Pre-Authentication process, to achieve both effectiveness and efficiency.

The Authenticator(s) used in the Operational Phase may be the same as one or more of those used in the Pre-Authentication step of the Registration Phase. More commonly, however, a purpose-designed arrangement is implemented to enable a quick and convenient process. One approach of long standing is for a 'shared secret' (password, PIN, passphrase, etc.) to be nominated by the User, or provided to the User by the operator. Another mechanism is a one-time password (OTP) provided to the User. This may be delivered just-in-time, via a separate and previously-agreed communications channel. Other currently mainstream approaches involve a one-time password generator that is pre-installed on the User's device(s) by a remote process, or physically delivered to them sufficiently in advance of the Login activity. The earlier steps in the Id/EM process enable the third step, Access Control, to establish a Session in which the User can exercise the Permissions to which it is entitled.
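One widely deployed form of one-time password generator is the HMAC-based scheme standardised as HOTP in RFC 4226. The article does not name a particular scheme, so its use here is an assumption made for illustration. The Python sketch below uses only the standard library, and the shared secret is the test value published in the RFC's appendix, not a value suitable for real use.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """Compute an HMAC-based one-time password (RFC 4226)."""
    # HMAC-SHA-1 over the 8-byte big-endian counter.
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte select an offset.
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def check_otp(secret: bytes, counter: int, submitted: str) -> bool:
    """Compare in constant time, as is usual for shared-secret checks."""
    return hmac.compare_digest(hotp(secret, counter), submitted)


shared_secret = b"12345678901234567890"   # RFC 4226 test secret, not for real use
first_code = hotp(shared_secret, 0)       # "755224" per the RFC's test vectors
```

The generator on the User's device and the checking service both hold the shared secret and a counter, so that the value submitted at Login serves as the Authenticator for the Operational Authentication step.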

This section has built on the prior presentations of PMM and GTA, and has presented new bodies of theory relating to Authorization (GTAz) and Id/Entity Management (Id/EM). The remainder of the article assesses the potential value that the theory can deliver to IS practice. The term Id/EM theory is used in the remainder of this document to refer to the combination of the elements described above as PMM, GTA, GTAz and Id/EM. Id/EM theory is a Type V Theory of Design and Action, in terms of the highly-cited classification in Gregor (2006, pp. 628-630).

This article has presented a theory of Id/Entity Management (Id/EM) derived from a Pragmatic Metatheoretic Model of the field addressed by IS practice, extended to embody theoretical treatments of Id/Entities, the Authentication of Assertions, and the critical elements of Authorization and Access Control. The theory has many features that evidence a degree of divergence from conventional Identity Management (IdM) terminology, models and practices. This section considers how the theory can be applied to achieve improvements in the quality of Authorization and Access Control.

No single Industry Standard dominates the IdM field, and no single IdM product or service dominates the market. The approach adopted is to consider several key sources that have had, and continue to have, substantial influence on industry practices. The primary focus is on standards documents that have been available for sufficiently long to have been reflected in contemporary products, services and practices. ISO documents that were reviewed include 'Terminology and Concepts for Identity Management' (ISO/IEC 24760-1 2019) and the associated 'Reference Architecture and Requirements' (ISO/IEC 24760-2 2015), supported by ISO/IEC 27001 2018 and ISO/IEC 29146 2024. Also of consequence is the NIST Special Publication 800-63 on Digital Identity Guidelines (NIST800-63-3 2017), and its three, separately-published Appendices, supplemented by the NIST Standard on Personal Identity Verification (FIPS-201-3 2022). These were complemented by a recent industry group specification, the FIDO Alliance's 'User Authentication Specifications' (FIDO 2018).

The various standards differ considerably in their purposes, approaches, language and structures. This section accordingly adopts a thematic approach, commencing with foundational matters, then considering particular key concepts, and finally architecture and process aspects. The text here is a condensed version of a working paper available at Clarke (2024b).

The Id/EM theory presented in this article is based on express metatheoretic assumptions that clearly distinguish two categories of Real-World Phenomena: Physical Things and Virtual Things, as depicted in Figure 4. That distinction is then carried across into the Abstract World, by means of the conceptual-model elements of Entities and Identities and the data-model elements of Entity Records and Identity Records. Each Id/Entity has one or more Id/Entifiers to distinguish Id/Entity-Instances. Each human Entity presents to the world multiple human Identities. A person who acts as a prison warder, undercover agent or protected witness is thereby afforded an opportunity to keep that Identity distinct from other Identities, such as householder, parent and football coach.

Conventional IdM practice, on the other hand, is not founded on a carefully-constructed meta-model. A fundamental issue is the conflation of Physical Things with Virtual Things, and Entities with Identities. ISO 24760-1, even after the 2019 revision, defines "identification" as a "process of recognizing an entity" (p. 1, emphases added), and verification as a "process of establishing that identity information ... associated with a particular entity ... is correct" (p. 3, emphases added). This is despite the document having earlier distinguished 'entity' (albeit somewhat confusingly) from 'identity'.

The NIST (2017) treatment of Real-World and Abstract-World elements, and of 'entity' and 'identity', is incomplete, inconsistent and confusing, in several ways. The NIST equivalent to Id/EM's Entity appears to be a (necessarily human) 'subject' or 'party', who progressively adopts the states of an applicant, defined as "A subject undergoing the processes of enrollment and identity proofing" (p. 39); then a claimant, defined as "A subject whose identity is to be verified using one or more authentication protocols" (p. 43); and finally a subscriber, who is "A party who has received a credential or authenticator from a CSP" (p. 55). In terms of Id/EM theory, each of 'applicant', 'claimant' and 'subscriber' is an Abstract-World Role or Identity modelling a Real-World Virtual Thing.

NIST's notion of "the subject's real-life identity" (NIST 2017, p. iv) blurs the Real World notion 'subject' with the Abstract World notion 'identity', which is defined by NIST as "an attribute or set of attributes that uniquely describes a subject within a given context" (p. 47). That definition corresponds to the Id/EM definition not for Identity but for either Identifier or Entifier ("a set of Data-Items that are together sufficient to distinguish a particular Id/Entity-Instance from others in the same category"). The layers of inconsistencies in usage create confusions, and ensure mutually incompatible implementations. Similar problems beset FIPS-201-3 (2022), the US government's Standard for Personal Identity Verification. It defines the Abstract-World notion of identity by reference to the Real World: "The set of physical and behavioral characteristics by which an individual is uniquely recognizable" (FIPS-201-3, p. 98, emphases added).

Standards and associated practices also feature mis-handling of relationship cardinality. Entities generally have multiple Identities, and Identities may be performed by multiple Entities, both serially and simultaneously. On the other hand, it is challenging to interpret the intentions of NIST (2017) regarding the cardinality of the relationship between 'subject' (presumably equivalent to Entity) and 'identity' (related to, but different from, Identity). In a single passage, the NIST document acknowledges the existence of 1-to-many relationships: "a subject can represent themselves online in many ways ..." (p. iv). Generally, however, the document appears to implicitly assume a one-to-one relationship -- i.e. that a Real-World human Physical Thing can only have a single Abstract-World Identity, and an Identity is only adopted by one human. NIST (2022), meanwhile, is concerned specifically with US federal employees and contractors, and does not appear to contemplate the possibility of anything other than a 1:1 relationship between an identity and an entity. This implicit assumption maps poorly to the needs of many government agencies.
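The many-to-many cardinality that Id/EM theory assumes, in contrast with the one-to-one assumption criticised above, can be made concrete with a short Python sketch. It keeps two indexes so that the association can be traversed from either side. The class name and sample values are hypothetical.

```python
from collections import defaultdict


class IdEntityMap:
    """Many-to-many association between Entifiers and Identifiers."""

    def __init__(self):
        self._identities_of = defaultdict(set)   # Entifier -> Identifiers
        self._entities_of = defaultdict(set)     # Identifier -> Entifiers

    def link(self, entifier: str, identifier: str) -> None:
        self._identities_of[entifier].add(identifier)
        self._entities_of[identifier].add(entifier)

    def identities_of(self, entifier: str) -> set:
        return set(self._identities_of.get(entifier, set()))

    def entities_of(self, identifier: str) -> set:
        return set(self._entities_of.get(identifier, set()))


m = IdEntityMap()
# One Entity presents several Identities ...
m.link("person-A", "householder")
m.link("person-A", "football-coach")
m.link("person-A", "duty-officer")
# ... and one Identity may be performed by more than one Entity.
m.link("person-B", "duty-officer")
```

A scheme that stores a single identity column against each subject cannot represent either of the two relationships shown in the example.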

Confusion is also evident in relation to industry Standards' scope of applicability. NIST (2017) declares its scope as being humans only: "The guidelines cover identity proofing and authentication of users (such as employees, contractors, or private individuals) interacting with government ICT systems over open networks" (p. iii). It wanders from this commitment, however. Its definition of authentication is "verifying the identity of a user, process, or device ..." (p. 41, emphasis added). It is clear that the undefined term 'user' is intended to refer only to humans, in that the examples provided are "employees, contractors, or private individuals" (p. iii) and "employees and contractors" (p. 4). In fact, the contexts of all of the 48 occurrences of the term 'authentication' are consistent with the interpretation that computing devices and processes running in them are not within-scope -- e.g. "this revision of these guidelines does not explicitly address device identity", and "specific requirements for issuing authenticators to devices when they are used in authentication protocols with people" are also excluded (p. 5). Such inconsistencies in the content of a Standard are bound to lead to variations in interpretations and implementations.

A further aspect of Id/EM theory is its application beyond IS Resources to encompass Physical Resources and Actions in the Real World. The various Standards documents, however, are much narrower in scope. For example, NIST (2017) defines access as being "To make contact with one or more discrete functions of an online, digital service" (p. 55, emphasis added), and such examples as are provided are limited to Data and Processes.

In addition, whereas Id/EM theory contemplates Malbehaviours, distinguishing Masquerade by an Imposter and Permission Breach, NIST (2017) merely mentions 'masquerade' and 'attack', but implicitly assumes that safeguards are successful, and hence the model does not encompass imposters within the system. There is also no notion of limitation of the purpose, use, task or function being performed by an authorized user, and hence Permission Breach is also out-of-scope of the NIST guidance.

In Id/EM theory, Authentication is a process that uses Evidence to establish a degree of confidence in the reliability of an Assertion. Id/EM theory avoids the naive epistemological assumption of 'accessible truth' in favour of a relativistic interpretation, with degrees of reliability (or of 'strength', as security theory commonly expresses it). Truth / verification / proof / validation notions are applicable within tightly-defined mathematical models, but not in the Real World in which identity management is applied. Given the complexities inherent in schemes that inter-relate humans and artefacts, socio-technical perspectives are essential to understanding and to effective analysis and design of IS.

In ISO 24760-1, "verification" is defined as "process of establishing that identity information ... associated with a particular entity ... is correct" and "authentication" is defined as "formalized process of verification ... that, if successful, results in an authenticated identity ... for an entity" (p. 3, emphases added), and 'identity proofing' (synonym: 'initial entity authentication') is defined as "verification ... based on identity evidence ..." (p. 5, emphases added). NIST (2017) is similarly strongly committed to truth-related notions, including 'proofing' and 'determination of validity'. The string 'verif' occurs more than 100 times in the document's 60 pp. The implication is that something approaching infallibility is achievable.

Id/EM theory has conceptual clarity as an objective, with careful attention paid to details concerning each element, and to the relationship of each element with other elements. It also strives to provide a comprehensive set, such that the terminology includes a defined term for each relevant concept. In conventional IdM, on the other hand, many key terms evidence fuzziness. For example, within the ISO 24760-1 standard, the definition of 'identification' as "process of recognizing an entity ... in a particular domain" (p. 3) makes no reference to the defined term 'identifier', and is immediately followed by "Note 1 to entry: The process of identification applies verification ..." (p. 3). Given that 'verification' is defined as "process of establishing that identity information ... associated with a particular entity is correct" (p. 3), the definitions not only conflate the notions of Identity and Entity but also the processes of Identification and Authentication.

In Id/EM theory, Credential means an Authenticator that carries the imprimatur of a Credential Authority. This is consistent with dictionary definitions of the term. The IETF sent the industry down an inappropriate path, defining the term as "A data object that is a portable representation of the association between an identifier and a unit of authentication information" (IETF 2007, p. 84). This is consistent with ICT industry usage of '(virtual) token', rather than with the notion of Credential.

The ISO 24760-1 standard invites confusion in this area. Despite defining the term 'evidence of identity', the document fails to refer to it when it defines credential, which is said to be a "representation of an identity ... for use in authentication ... A credential can be a username, username with a password, a PIN, a smartcard, a token, a fingerprint, a passport, etc." (p. 4). This muddles all of Evidence, Entity, Identity, Attribute, Identifier and Entifier, and omits any sense of a Credential being evidence of high reliability, having been issued or warranted by a Credential Authority. Early versions of NIST's guidance adopted the same approach to 'credential' as IETF. The confusion between credential and token was acknowledged in 2017, but the new version does little to clear the fog, and it omits the notion of an authority. The Id/EM theory definition of a Token as "a recording medium on which useful Data is stored ..." is also the subject of confused and confusing expression in all of IETF (2007), NIST (2017) and ISO 24760-1.

Authorization is defined in Id/EM theory as a process whereby an Authorization Authority decides whether or not to declare that an Actor has one or more Permissions in relation to a particular IS Resource or Physical Resource. Authorization is clearly distinguished from Access Control, which is defined as "a process that utilises previously recorded Permissions to establish a Session that enables a User to exercise the appropriate Permissions".

All of the Standards are internally inconsistent in their usages of these terms, sometimes using them as synonyms, e.g. "Access control or authorization is the decision to permit or deny a subject access to system objects (network, data, application, service, etc.)" (NIST800-162 2014, p. 2, emphasis added). Not only is there a lack of clarity as to whether it is a process, or the end-point of a process, i.e. a decision, but 'an authorization' is also sometimes used to refer to the result of or output from the process.

Id/EM theory reflects the circumstances prevalent since c.1990, whereby participants in IS may be within a single organisation, across multiple organisations, or external to any organisation. Its definition of Role is accordingly "a coherent pattern of behaviour performed in a particular context", which avoids limiting the Role that an Id/Entity adopts to an organisational position or function.

The IETF Glossary of 2000, even after revision in 2007, defines role to mean "a job function or employment position" (IETF 2007, p. 254). The NIST exposition on ABAC also adopts the narrow view that "a role has no meaning unless it is defined within the context of an organization" (NIST800-162 2014, p. 26). Further, although the document suggests that ABAC supports "arbitrary attributes of the user and arbitrary attributes of the [IS resource]" (p. vii), the only examples provided for actors' attributes in the entire 50-page document are position descriptions internal to an organisation: "a Nurse Practitioner in the Cardiology Department" (pp. viii, 10), "Non-Medical Support Staff" (p. 10). Moreover, an assumption implicit in many interpretations of the NIST document is that a one-to-one relationship exists between organisational identity and organisational role. This denies an employee the ability to gain Permissions relevant to additional functions such as a fire warden, mentor to a junior assistant, and member of an interview panel.

Id/EM theory defines a Permission as (a) an entitlement granted (b) to an Id/Entity-Instance (c) to be provided with the capability to perform a specified Action (d) in relation to a specified IS Resource or Physical Resource, and (e) for a particular Purpose, Use, Function or Task. This is a relatively expansive notion. All Standards documents, on the other hand, are limited to the notions inherent in Identity-based (IBAC) and Role-based (RBAC) models. As a result, contemporary practice largely fails to fulfil the needs of organisations to implement 'the need-to-know principle' and the 'principle of least privilege', and to achieve compliance with longstanding provisions of data protection laws.

A final area of consideration is the overall architecture and process flow. Id/EM theory includes a generic process model, depicted in Figure 6, which distinguishes two Phases, of respectively four and three steps, with Data stored in an Account intermediating between the core Authorization and Access Control activities, and with all terms defined clearly and consistently, thereby minimising syntactic and semantic ambiguities.

Depictions in industry Standards and in descriptions of products and services exhibit many variants and inconsistencies. As evidenced by the diagrammatic version of the Digital Identity Model in Figure 1, the NIST document provides limited guidance about the process. The textual description goes somewhat further towards a process definition, saying "the left side of the diagram shows the enrollment, credential issuance, lifecycle management activities, and various states of an identity proofing and authentication process" and "the right side of [the diagram] shows the entities and interactions involved in using an authenticator to perform digital authentication" (pp. 10-11). The preliminary phase is referred to as Enrollment and Identity Proofing, and the second phase is called Digital Authentication, but there is limited clarification of the phases' sub-structure (pp. 10-12).

This section has provided a brief scan of industry Standards in light of the theory proposed in this paper. Id/EM theory enables the detection of conceptual inadequacies and conflations, and textual ambiguities and imprecisions. Further, it provides a suite of terms and definitions, situated within a coherent and comprehensive model and associated terminology. Together, these features enable not only constructive critique of existing Standards and guidance documents, but also adaptation of them to overcome their current deficiencies and support consistent and hence mutually compatible implementations.

The purpose of the research reported in this article was to contribute to improved IS practice and practice-oriented IS research in relation to authorization and access control processes, within the broader context of identity management. The analysis has demonstrated that conventional theory relating to Identity Management (IdM) embodies inadequate modelling of the relevant real-world phenomena, internal inconsistencies, unhelpful terms, and conceptual and definitional confusion. By applying an extended version of a previously-published pragmatic metatheoretic model (PMM), those inadequacies and inconsistencies can be overcome.

The practice of IdM since 2000 has been undermined by many flaws in the underlying theory. The replacement Id/EM framework defined in this article provides a reference-point against which existing practices and designs can be reviewed, and consideration given to adaptations that will address their weaknesses. To the extent that practices and designs are not capable of adaptation, the replacement theory supports the alternative approach of quickly and cleanly conceiving and implementing replacement products and services. Developments of such kinds would bring with them the opportunity for upgrading or replacement of defective standards, both internationally (ISO/IEC) and nationally (e.g. NIST/FIPS).

The benefits of substantial changes in this field would accrue to all stakeholders. Organisations can achieve greater effectiveness in their operations, better manage business risks, and operate more efficiently, by authenticating the Assertions that matter. Individuals will be relieved of inconveniences and intrusions that are unnecessary or disproportionate to the need, and subjected to only the effort, inconvenience, costs and intrusions that are justified by the nature of their interactions and dependencies. For this outcome to be achieved, this research needs to be applied in the field, and the theory used as a lens by theorists, standards-producers, public policy organisations, designers and service-providers.

Aboukadri S., Ouaddah A. & Mezrioui A. (2024) 'Machine learning in identity and access management systems: Survey and deep dive' Computers & Security 139 (2024) 103729

Baumer E.P.S. (2015) 'Usees' Proc. 33rd Annual ACM Conference on Human Factors in Computing Systems (CHI'15), April 2015, at http://ericbaumer.com/2015/01/07/usees/

Berleur J. & Drumm J. (Eds.) (1991) 'Information Technology Assessment' Proc. 4th IFIP-TC9 International Conference on Human Choice and Computers, Dublin, July 8-12, 1990, Elsevier Science Publishers (North-Holland), 1991

Clarke R. (1992a) 'Extra-Organisational Systems: A Challenge to the Software Engineering Paradigm' Proc. IFIP World Congress, Madrid, September 1992, PrePrint at http://www.rogerclarke.com/SOS/PaperExtraOrgSys.html

Clarke R. (1992b) 'Practicalities of Keeping Confidential Information on a Database With Multiple Points of Access: Technological and Organisational Measures' Invited Paper for a Seminar of the Independent Commission Against Corruption (ICAC) of the State of N.S.W. on 'Just Trade? A Seminar on Unauthorised Release of Government Information', Sydney Opera House, 12 October 1992, at http://rogerclarke.com/DV/PaperICAC.html

Clarke R. (1994a) 'The Digital Persona and Its Application to Data Surveillance' The Information Society 10,2 (June 1994), PrePrint at http://www.rogerclarke.com/DV/DigPersona.html

Clarke R. (1994b) 'Human Identification in Information Systems: Management Challenges and Public Policy Issues' Information Technology & People 7,4 (December 1994) 6-37, PrePrint at http://www.rogerclarke.com/DV/HumanID.html

Clarke R. (2002a) 'Why Do We Need PKI? Authentication Re-visited' Proc. 1st Annual PKI Research Workshop, at NIST, Gaithersburg MD, April 24-25, 2002, at http://rogerclarke.com/EC/PKIRW02.html

Clarke R. (2002b) 'e-Consent: A Critical Element of Trust in e-Business' Proc. 15th Bled Electronic Commerce Conference, Bled, Slovenia, June 2002, PrePrint at http://www.rogerclarke.com/EC/eConsent.html

Clarke R. (2004) 'Identity Management: The Technologies, Their Business Value, Their Problems, Their Prospects' Xamax Consultancy Pty Ltd, March 2004, ISBN 0-9589412-3-8, 66pp., at http://www.xamax.com.au/EC/IdMngt.html

Clarke R. (2009) 'A Sufficiently Rich Model of (Id)entity, Authentication and Authorisation' Proc. IDIS 2009 - The 2nd Multidisciplinary Workshop on Identity in the Information Society, LSE, London, 5 June 2009, at http://www.rogerclarke.com/ID/IdModel-090605.html

Clarke R. (2014) 'Promise Unfulfilled: The Digital Persona Concept, Two Decades Later' Information Technology & People 27, 2 (Jun 2014) 182-207, PrePrint at http://www.rogerclarke.com/ID/DP12.html

Clarke R. (2019) 'Risks Inherent in the Digital Surveillance Economy: A Research Agenda' Journal of Information Technology 34,1 (Mar 2019) 59-80, PrePrint at http://www.rogerclarke.com/EC/DSE.html

Clarke R. (2021) 'A Platform for a Pragmatic Metatheoretic Model for Information Systems Practice and Research' Proc. Austral. Conf. Infor. Syst, December 2021, PrePrint at http://rogerclarke.com/ID/PMM.html

Clarke R. (2022) 'A Reconsideration of the Foundations of Identity Management' Proc. Bled eConference, June 2022, PrePrint at http://rogerclarke.com/ID/IDM-Bled.html

Clarke R. (2023a) 'The Theory of Identity Management Extended to the Authentication of Identity Assertions' Proc. 36th Bled eConference, June 2023, PrePrint at http://rogerclarke.com/ID/IEA-Bled.html

Clarke R. (2023b) 'How Confident are We in the Reliability of Information? A Generic Theory of Authentication to Support IS Practice and Research' Proc. ACIS'23, December 2023, PrePrint at http://rogerclarke.com/ID/PGTA.html

Clarke R. (2024a) 'A Pragmatic Model of (Id)Entity Management (IdEM) -- Glossary' Xamax Consultancy Pty Ltd, April 2024, at http://rogerclarke.com/ID/IDM-G.html

Clarke R. (2024b) 'A Generic Theory of Authorization, Access Control and Id/Entity Management -- Annex: Application of the Theory to Mainstream Standards' Xamax Consultancy Pty Ltd, May 2023, at http://rogerclarke.com/ID/GT-IdEM.html

Coiera E. & Clarke R. (2002) 'e-Consent: The Design and Implementation of Consumer Consent Mechanisms in an Electronic Environment' J Am Med Inform Assoc 11,2 (Mar-Apr 2004) 129--140, at https://www.ncbi.nlm.nih.gov/pmc/articles/PMC353020/

Cuellar M.J. (2020) 'The Philosopher's Corner: Beyond Epistemology and Methodology - A Plea for a Disciplined Metatheoretical Pluralism' The DATABASE for Advances in Information Systems 51, 2 (May 2020) 101-112

Davis G.B. (1974) 'Management Information Systems: Conceptual Foundations, Structure, and Development' McGraw-Hill, 1974

FIDO (2022) 'User Authentication Specifications Overview' FIDO Alliance, 8 December 2022, at https://fidoalliance.org/specifications/

FIPS-201-3 (2022) 'Personal Identity Verification (PIV) of Federal Employees and Contractors' [US] Federal Information Processing Standards, January 2022, at https://nvlpubs.nist.gov/nistpubs/FIPS/NIST.FIPS.201-3.pdf

Fischer-Huebner S. (2001) 'IT-Security and Privacy: Design and Use of Privacy-Enhancing Security Mechanisms' LNCS Vol. 1958, Springer, 2001, at https://link.springer.com/content/pdf/10.1007/3-540-45150-1.pdf?pdf=button

Fischer-Huebner S. & Lindskog H. (2001) 'Teaching Privacy-Enhancing Technologies' Proc. IFIP WG 11.8 2nd World Conf. on Information Security Education, Perth, Australia

Gregor S. (2006) 'The Nature of Theory in IS' MIS Quarterly 30,3 (September 2006) 611-642

IETF (2000) 'Internet Security Glossary', Internet Engineering Task Force, RFC2828, May 2000, at http://www.ietf.org/rfc/rfc2828.txt

IETF (2007) 'Internet Security Glossary, Version 2', Internet Engineering Task Force, RFC4949, August 2007, at http://www.ietf.org/rfc/rfc4949.txt

ISO 22600-1:2014 'Health informatics -- Privilege management and access control -- Part 1: Overview and policy management' International Standards Organisation TC 215 Health informatics, 2014

ISO 22600-2:2014 'Health informatics -- Privilege management and access control -- Part 2: Formal models' International Standards Organisation TC 215 Health informatics, 2014

ISO/IEC 24760-1 (2019) 'A Framework for Identity Management -- Part 1: Terminology and concepts' International Standards Organisation SC27 IT Security techniques, 2019, at https://standards.iso.org/ittf/PubliclyAvailableStandards/c077582_ISO_IEC_24760-1_2019(E).zip

ISO/IEC 24760-2 (2017) 'A Framework for Identity Management -- Part 2: Reference architecture and requirements' International Standards Organisation SC27 IT Security techniques, 2017

ISO/IEC 27001 (2018) 'Information technology -- Security techniques -- Information security management systems -- Overview and vocabulary' International Standards Organisation, 2018, at https://standards.iso.org/ittf/PubliclyAvailableStandards/c073906_ISO_IEC_27000_2018_E.zip

ISO/IEC 24760-3 (2019) 'A Framework for Identity Management -- Part 3: Practice' International Standards Organisation SC27 IT Security techniques, 2019

ISO/IEC 29146 (2024) 'Information technology -- Security techniques -- A framework for access management' International Standards Organisation, 2024, at https://www.iso.org/obp/ui/en/#iso:std:iso-iec:29146:ed-2:v1:en

ITG (2023) 'List of Data Breaches and Cyber Attacks' IT Governance Blog, monthly, at https://www.itgovernance.co.uk/blog/category/monthly-data-breaches-and-cyber-attacks

Iyer P., Masoumzadeh A. & Narendran P. (2021) 'On the Expressive Power of Negated Conditions and Negative Authorizations in Access Control Models' Computers & Security 116 (May 2022) 102586

Josang A. (2017) 'A Consistent Definition of Authorization' Proc. Int'l Wksp on Security and Trust Management, 2017, pp 134--144

Josang A. & Pope S. (2005) 'User Centric Identity Management' Proc. Conf. AusCERT, 2005, at https://citeseerx.ist.psu.edu/document?repid=rep1&type=pdf&doi=58c591293f05bb21aa19d71990dbdda642fbf99a

Karjoth G., Schunter M. & Waidner M. (2002) 'Platform for Enterprise Privacy Practices: Privacy-enabled Management of Customer Data' Proc. 2nd Workshop on Privacy Enhancing Technologies, Lecture Notes in Computer Science. Springer Verlag, 2002, at https://citeseerx.ist.psu.edu/document?repid=rep1&type=pdf&doi=9938422ed2c8b8cb045579f616e21f18b89c8e36

Karyda M. & Mitrou L. (2016) 'Data Breach Notification: Issues and Challenges for Security Management' Proc. 10th Mediterranean Conf. on Infor. Syst., Cyprus, September 2016, at https://www.researchgate.net/profile/Maria-Karyda/publication/309414062_DATA_BREACH_NOTIFICATION_ISSUES_AND_CHALLENGES_FOR_SECURITY_MANAGEMENT/links/580f4b4608aef2ef97afc0b2/DATA-BREACH-NOTIFICATION-ISSUES-AND-CHALLENGES-FOR-SECURITY-MANAGEMENT.pdf

Li N., Mitchell J.C. & Winsborough W.H. (2002) 'Design of a Role-based Trust-management Framework' IEEE Symposium on Security and Privacy, May 2002, pp. 1-17, at https://web.cs.wpi.edu/~guttman/cs564/papers/rt_li_mitchell_winsborough.pdf

Lupu E. & Sloman M. (1997) 'Reconciling Role Based Management and Role Based Access Control' Proc. ACM/NIST Workshop on Role Based Access Control, 1997, pp. 135-141, at https://dl.acm.org/doi/pdf/10.1145/266741.266770

Michael M.G. & Michael K. (2014) 'Uberveillance and the Social Implications of Microchip Implants: Emerging Technologies' IGI Global, 2014, at http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.643.3519&rep=rep1&type=pdf

Mühle A., Grüner A., Gayvoronskaya T. & Meinel C. (2018) 'Survey on Essential Components of a Self-Sovereign Identity' Computer Science Review, 2018, at https://arxiv.org/pdf/1807.06346

Myers M.D. (2018) 'The philosopher's corner: The value of philosophical debate: Paul Feyerabend and his relevance for IS research' The DATA BASE for Advances in Information Systems 49, 4 (November 2018) 11-14

NIST800-63-3 (2017) 'Digital Identity Guidelines' National Institute of Standards and Technology, 2017, at https://doi.org/10.6028/NIST.SP.800-63-3

NIST800-63-3A (2017) 'Digital Identity Guidelines: Enrollment and Identity Proofing' National Institute of Standards and Technology, 2017, at https://doi.org/10.6028/NIST.SP.800-63a

NIST800-63-3B (2017) 'Digital Identity Guidelines: Authentication and Lifecycle Management' National Institute of Standards and Technology, 2017, at https://doi.org/10.6028/NIST.SP.800-63b

NIST800-63-3C (2017) 'Digital Identity Guidelines: Federation and Assertions' National Institute of Standards and Technology, 2017, at https://doi.org/10.6028/NIST.SP.800-63c

NIST800-63-4 (2022) 'Digital Identity Guidelines: Initial Public Draft' Special Publication SP 800-63-4 ipd, US National Institute of Standards and Technology, December 2022, at https://doi.org/10.6028/NIST.SP.800-63-4.ipd

NIST800-162 (2014) 'Guide to Attribute Based Access Control (ABAC) Definition and Considerations' NIST Special Publication 800-162, National Institute of Standards and Technology, updated to February 2019, at https://nvlpubs.nist.gov/nistpubs/SpecialPublications/NIST.SP.800-162.pdf

Pernul G. (1995) 'Information Systems Security -- Scope, State-of-the-art and Evaluation of Techniques' International Journal of Information Management 15,3 (1995) 165-180

Pfitzmann A. & Hansen M. (2006) 'Anonymity, Unlinkability, Unobservability, Pseudonymity, and Identity Management -- A Consolidated Proposal for Terminology' May 2009, at https://citeseerx.ist.psu.edu/document?doi=76296a3705d32a16152875708465c136c70fe109

Pritee Z.T., Anik M.H., Alam S.B., Jim R.J., Kabir M.M. & Mridha M.F. (2024) 'Machine learning and deep learning for user authentication and authorization in cybersecurity: A state-of-the-art review' Computers & Security 140 (2024) 103747

Sandhu R.S., Coyne E.J., Feinstein H.L. & Youman C.E. (1996) 'Role-Based Access Control Models' IEEE Computer 29,2 (February 1996) 38-47, at https://csrc.nist.gov/csrc/media/projects/role-based-access-control/documents/sandhu96.pdf

Schlaeger C., Priebe T., Liewald M. & Pernul G. (2007) 'Enabling Attribute-based Access Control in Authentication and Authorisation Infrastructures' Proc. Bled eConference, June 2007, pp. 814-826

Sindiren E. & Ciylan B. (2019) 'Application model for privileged account access control system in enterprise networks' Computers & Security 83 (June 2019) 52-67

Stallings W. & Brown L. (2015) 'Computer Security: Principles and Practice' Pearson, 3rd Ed., 2015

Thomas R.K. & Sandhu R.S. (1997) 'Task-based Authorization Controls (TBAC): A Family of Models for Active and Enterprise-oriented Authorization Management' Proc. IFIP WG11.3 Workshop on Database Security, Lake Tahoe Cal., August 1997, at https://profsandhu.com/confrnc/ifip/i97tbac.pdf

Zhao J. & Su Q. (2024) 'A threshold traceable delegation authorization scheme for data sharing in healthcare' Computers & Security 139 (2024) 103686

ANSI (2012) 'Information Technology - Role Based Access Control' INCITS 359-2012, American National Standards Institute, 2012

Zhong J., Bertok P., Mirchandani V. & Tari Z. (2011) 'Privacy-Aware Granular Data Access Control For Cross-Domain Data Sharing' Proc. Pacific Asia Conf. Infor. Syst. 2011, 226

This paper builds on prior publications by the author on the topic of identity authentication, including Clarke (1994b, 2004, 2009), and several specific contributions in relation to PMM and GTA, in papers cited within the article. An early version of the theory in sections 3-6 of this article was presented at ACIS'23, Wellington, in December 2023. This much-expanded article benefited considerably from the comments and questions of the formal reviewers of the previous papers, the participants in the presentation sessions, and comments offered by my colleagues Malcolm Crompton, Ross Oakley, Ron Weber and Stephen Wilson.

Roger Clarke is Principal of Xamax Consultancy Pty Ltd, Canberra. He is also a Visiting Professorial Fellow associated with UNSW Law & Justice, and a Visiting Professor in the School of Computing at the Australian National University.

Created: 23 March 2023 - Last Amended: 2 June 2024 by Roger Clarke - Site Last Verified: 15 February 2009
