Roger Clarke's Web-Site

© Xamax Consultancy Pty Ltd, 1995-2024


Notes in preparation for a Distinguished Lecture to the Young Southeast Asia Leaders Initiative (YSEALI) of Fulbright University Vietnam, on 7 June 2022

Version of 6 June 2022

© Xamax Consultancy Pty Ltd, 2022

Available under an AEShareNet licence or a Creative Commons licence.

This document is at http://rogerclarke.com/EC/AIYV.html

The accompanying slide-set is at http://rogerclarke.com/EC/AIYV.pdf

The original conception of artificial intelligence (old AI) was as a simulation of human intelligence. That has proven to be an ill-judged quest. In addition to leading too many researchers repetitively down too many blind alleys, the idea embodies far too many threats to individuals, societies and economies. After 70 years, it's time to redefine the field. A review of the original conception, operational definitions and important examples points to replacement notions of complementary artefact intelligence and hence augmented intelligence (new AI). However, combining matters of intellect with action leads to a broader conception of greater potential value. Young leaders of today need to manage technology and organisations towards complementary artefact capability and the real target: augmented capability (AC).

To understand the present and near future, we need to have a sufficiently clear picture of the relevant past. With this in mind, Professor Mariano invited me to take a long view of Artificial Intelligence (AI) and associated technologies, and their impacts.

I'll commence by going back to AI as it was originally conceived, present an operational definition, outline a range of ways in which AI has been embodied, and highlight a couple of key ways in which software development using AI techniques differs from prior approaches. That will provide a basis for the identification of generic threats that AI harbours, and broad areas in which it is capable of seriously negative impacts, on individuals, on the economy, on societies, and hence on organisations. I'll conclude by proposing that a very different conception of 'AI' is already emergent, which will be far more likely to serve the need.

The AI notion was based on

"the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it" (McCarthy et al. 1955)

"The hypothesis is that a physical symbol system [of a particular kind] has the necessary and sufficient means for general intelligent action" (Simon - variants in 1958, 1969, 1975 and 1996, p.23)

The reference-point was human intelligence, and the intention was to create artificial forms of human intelligence using a [computing] machine.

In a surprisingly short time, the 'conjecture' developed into a fervid belief, e.g.:

"Within the very near future - much less than twenty-five years - we shall have the technical capability of substituting machines for any and all human functions in organisations. ... Duplicating the problem-solving and information-handling capabilities of the brain is not far off; it would be surprising if it were not accomplished within the next decade" (Simon 1960)

Herbert Simon (1916-2001) ceased sullying his strong reputation with such propositions two decades ago; but disciples have kept the belief alive:

"By the end of the 2020s [computers will have] intelligence indistinguishable to biological humans" (Kurzweil 2005, p.25)

So virile is the AI meme that it has been attempting to take over the entire family of information, communication and engineering technologies. Some people are now using the term for any kind of software, developed using any kind of technique, and running in any kind of device. There has also been some blurring of the distinction between AI software and the computing or computer-enhanced device in which it is installed.

Yet AI has had an unhappy existence. It has blown hot and cold through summers and winters, as believers have multiplied, funders have provided resources, funders have become disappointed by the very limited extent to which AI's proponents delivered on their promises, and many believers have faded away again. One major embarrassment was the failure of Japan's much-vaunted 'Fifth Generation Project', a decade-long initiative that commenced in 1982 (Pollack 1992).

One of the adaptations by AI practitioners has been to divorce themselves from the grand challenge that the original conception represented. They refer to the original notion as 'artificial general intelligence' or 'strong AI', which 'aspired to' replicate human intelligence, and instead adopt the stance that their work is 'inspired by' it.

The last decade has seen a prolonged summer for AI technologists and promoters, who have attracted many investors. But it's autumn down here in Australia, and I see the current euphoria about AI as also being in its autumn, facing another hard winter ahead. With so much enthusiasm, and so many bold predictions about the promise of AI, why am I so dubious? Let's follow through a review of some of the many, tangled meanings people attribute to the term Artificial Intelligence, and see where it leads us.

People working in AI have had successes. These include techniques for pattern recognition in a wide variety of contexts, including in aural signals representing sound, and images representing visual phenomena. The application of these techniques has involved the engineering of products, which has led to more grounded explanations of how to judge whether an artefact is or is not a form of AI.

Here's an interpretation of the most useful attempts I've seen at an operational definition of AI. I stress that this is a paraphrase of multiple sources (including Albus 1991, Russell & Norvig 2003, McCarthy 2007) rather than a direct quotation.

Intelligence is exhibited by an artefact if it:

(1) evidences (a) perception and (b) cognition of relevant aspects of its environment;

(2) has goals; and

(3) formulates actions towards the achievement of those goals;

but also (for some commentators at least):

(4) implements those actions.

A complementary approach to understanding what AI is and does, and what it is not and does not do, is to consider the kinds of artefacts that have been used in an endeavour to deliver it. The obvious category of artefact is computers. The notion goes back to the industrial era, in the first half of the 19th century, with Charles Babbage as engineer and Ada Lovelace as the world's first programmer. We're all familiar with the explosion in innovation associated with electronic digital computers that commenced about 1940 and is still continuing.

Computers were intended for computation. However, the capabilities needed to support computation can also support processes that have other kinds of objectives.

A program is generally written with a purpose in mind, thereby providing the artefact with something resembling goals - attribute (2) of the operational definition.

They can be applied to assist in the 'formulation of actions' - attribute (3) of the operational definition.

They can even be used to achieve a kind of 'cognition', if only in the limited sense of categorisation based on pattern-similarity - attribute (1b).

Adding 'sensors' that gather or create data representing the device's surroundings provides at least a primitive form of 'perception' of the real world - attribute (1a).

So it is not difficult to contrive a simple demonstration of a computer that can be argued to satisfy the shorter version of the operational definition of AI.
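As an illustration of how low that bar is, here is a minimal Python sketch of a thermostat-like artefact. The class, its method names and the temperature figures are hypothetical, invented for this example rather than drawn from the sources cited above; the comments map each step to the attributes of the operational definition.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ThermostatAgent:
    """Hypothetical artefact, used only to illustrate attributes (1)-(4)."""

    target_temp: float                                # (2) the goal set by the programmer
    tolerance: float = 0.5
    actuator: Optional[Callable[[str], None]] = None  # (4) optional effector

    def perceive(self, sensor_reading: float) -> float:
        # (1a) 'perception': accept data representing the device's surroundings
        return sensor_reading

    def categorise(self, temp: float) -> str:
        # (1b) 'cognition', only in the limited sense of categorising the reading
        if temp < self.target_temp - self.tolerance:
            return "too cold"
        if temp > self.target_temp + self.tolerance:
            return "too warm"
        return "ok"

    def formulate_action(self, category: str) -> str:
        # (3) formulate an action directed towards achieving the goal
        return {"too cold": "heat on", "too warm": "heat off", "ok": "no change"}[category]

    def step(self, sensor_reading: float) -> str:
        action = self.formulate_action(self.categorise(self.perceive(sensor_reading)))
        if self.actuator is not None:
            self.actuator(action)  # (4) implement the action, if an actuator exists
        return action


log: List[str] = []
agent = ThermostatAgent(target_temp=21.0, actuator=log.append)
for reading in [18.0, 21.2, 23.5]:
    print(reading, "->", agent.step(reading))
# 18.0 -> heat on
# 21.2 -> no change
# 23.5 -> heat off
```

It meets the letter of the shorter definition, and with an actuator attached arguably the longer one, while plainly exhibiting nothing resembling human intelligence.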

A computer can be extended by means of 'actuators' of various kinds to provide it with the capacity to act on the real world. The resulting artefact is both 'a computer that does' and 'a machine that computes', and hence what we commonly call a 'robot'. The term was invented for a play (Capek 1923), and has been much used in science fiction, particularly by Isaac Asimov during the period 1940-85 (Asimov 1968, 1985). Industrial applications became increasingly effective in structured contexts from about 1960. Drones are flying robots, and submersible drones are swimming robots.

Other categories of artefact can support software that might satisfy the definition of AI. These include everyday things, such as bus-stops, many of which now have effectors in the form of display panels, with the images selected or devised to sell something to someone. Their sensors may be cameras that detect and analyse faces, or detectors of personal devices that enable access to data about the device-carriers' attributes.

Another category is vehicles. My car is a 1994 BMW M3, which was an early instance of a vehicle whose fuel input, braking and suspension were supported by an electronic control unit. A quarter-century later, vehicles have far more layers of automation intruding into the driving experience. And of course driverless vehicles depend on software performing much more abstract functions than adapting fuel mix.

For many years, sci-fi novels and feature films have used as a stock character a robot that is designed to resemble humans, referred to as a humanoid. From time to time, it is re-discovered that greater rapport is achieved between a human and a device if the device evidences feminine characteristics. Whereas the notion 'humanoid' is gender-neutral, 'android' (male) and 'gynoid' (female) are gender-specific. The English translation of Capek's play used 'robotess' and more recent entertainments have popularised 'fembot'. This notion was central to an early and much-celebrated AI, called Eliza (Weizenbaum 1966). Household-chore robots, and the voices in digital assistants in the home and car, commonly embody gynoid attributes. The recent feature films 'Her' (2013) and 'Ex Machina' (2014) delve into further possibilities. These ideas challenge various assumptions about the nature of humanity, and of gender.

A promising, and challenging, category of relevance is cyborgs - that is to say, humans whose abilities are augmented with some kind of artefact, as simple as a walking-stick, or with simple electronics such as a heart pacemaker or a health-condition alert mechanism. As more powerful and sophisticated computing facilities and software are installed, the prospect exists of AI integrated into a human, and guiding or even directing the human's effectors.

A variety of the real examples that have been discussed can be argued to satisfy elements of the definition of AI. Particularly strong cases exist for industrial robots, and driverless vehicles on rails, in dedicated bus-lanes and in mines. In the case of Mars buggies, the justification is not just economic: the signal-latency in Earth-Mars communications (roughly 3 to 22 minutes each way, depending on the planets' relative positions) precludes effective operation by an Earth-bound 'driver'. These examples pass the test, because there is evidence of:

However, it requires very charitable interpretation to argue that there is evidence of the second-order intellect that we associate with human intelligence:

To get to grips with AI, it's necessary to consider the different ways in which the intellectual aspects of artefact behaviour are brought into being. During the first 5-6 decades of the information technology era, software was developed using procedural or imperative languages. These involve the expression of a solution, and hence require a clear (even if not explicit) definition of a problem (Clarke 1991). They depend on genuinely algorithmic languages, meaning that a program comprises a finite sequence of well-defined instructions, including selection and iteration constructs.
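By way of illustration, here is a minimal Python sketch of the procedural style, using binary search purely as a familiar example (it is not taken from Clarke 1991). The problem is defined before any code is written (locate a value in a list sorted in ascending order), and the solution is a finite sequence of well-defined steps built from iteration and selection.

```python
from typing import List, Optional


def binary_search(sorted_items: List[int], target: int) -> Optional[int]:
    """Return the index of target in sorted_items, or None if it is absent.

    Problem definition (explicit): sorted_items is in ascending order.
    Solution: repeatedly halve the interval that could contain target.
    """
    low, high = 0, len(sorted_items) - 1
    while low <= high:                   # iteration
        mid = (low + high) // 2
        if sorted_items[mid] == target:  # selection
            return mid
        elif sorted_items[mid] < target:
            low = mid + 1
        else:
            high = mid - 1
    return None  # the interval shrinks every pass, so the procedure always terminates


print(binary_search([2, 3, 5, 7, 11, 13], 11))  # 4
print(binary_search([2, 3, 5, 7, 11, 13], 4))   # None
```

Because every step can be traced back to the problem definition, code of this kind can be explained, audited and corrected line by line.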

Research within the AI field has variously delivered and co-opted a variety of techniques. One of particular relevance is rule-based 'expert systems'. Importantly, a rule-set does not recognise a problem or a solution, but just what is usefully referred to as 'a problem-domain', that is to say, a space within which cases arise. The idea of a problem is merely a perception by people who are applying some kind of value-system when they observe what goes on in the space.
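A toy forward-chaining sketch in Python may help to show the difference. The rules and facts are hypothetical, and real expert-system shells are far more elaborate. The rule-set states only relationships within a domain (here, a fragment of animal classification); nothing in it defines a problem or a solution. A 'case' is just a set of facts, and the engine adds whatever conclusions the rules license.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule is (conditions, conclusion): if every condition is a known fact,
# the conclusion is added as a new fact. These toy rules are hypothetical.
Rule = Tuple[FrozenSet[str], str]

RULES: List[Rule] = [
    (frozenset({"has feathers"}), "is a bird"),
    (frozenset({"is a bird", "cannot fly", "swims"}), "is a penguin"),
    (frozenset({"has fur", "gives milk"}), "is a mammal"),
]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Tuple[Set[str], List[str]]:
    """Apply rules repeatedly until no new facts emerge; return facts and a firing trace."""
    known = set(facts)
    trace: List[str] = []
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                trace.append(f"{sorted(conditions)} => {conclusion}")
                changed = True
    return known, trace


case = {"has feathers", "cannot fly", "swims"}
conclusions, trace = forward_chain(case, RULES)
print("is a penguin" in conclusions)  # True
for line in trace:
    print(line)
```

The trace of rule firings also gives a rudimentary account of why a conclusion was reached, which is relevant to the concerns about opaqueness discussed later.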

Another relevant software development technique is AI/ML (machine-learning) and its most widely-discussed form, artificial neural networks (ANN). The original conception of AI referred to learning as a primary feature of (human) intelligence. The ANN approach, however, adopts a narrow focus on data that represents instances. There is not only no sense of problem or solution, but also no model of the problem-domain. There's just data. It is implicitly assumed that the world can be satisfactorily understood, and decisions made, and actions taken, on the basis of the similarity of a new case to the set of cases that have previously been reflected in the software.

This is 'empirical'. It is not algorithmic. It is also not scientific in the sense of providing a coherent, systematic explanation of the behaviour of phenomena. Science requires theory about the world, directed empirical observation, and feedback to correct, refine or replace the theory. AI/ML embodies no theory about the world.
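The 'similarity to previous cases' idea can be sketched in a few lines of Python. For brevity this uses a k-nearest-neighbour classifier rather than an artificial neural network, so it is a simpler stand-in for the technique discussed above, not an instance of it; the training cases, labels and the choice of k=3 are all hypothetical.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple

Case = Tuple[Tuple[float, float], str]

# Hypothetical previously-seen cases: (feature vector, label). No model of the
# problem-domain is supplied anywhere; there is only data.
TRAINING: List[Case] = [
    ((1.0, 1.0), "approve"),
    ((1.2, 0.8), "approve"),
    ((0.9, 1.1), "approve"),
    ((4.0, 4.2), "decline"),
    ((4.1, 3.9), "decline"),
    ((3.8, 4.0), "decline"),
]


def knn_classify(case: Sequence[float], training: List[Case], k: int = 3) -> str:
    """Label a new case by majority vote among its k most similar past cases."""
    by_distance = sorted(training, key=lambda item: math.dist(case, item[0]))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]


print(knn_classify((1.1, 0.9), TRAINING))  # approve
print(knn_classify((3.9, 4.1), TRAINING))  # decline
# A case unlike anything seen before still receives a label:
print(knn_classify((50.0, -20.0), TRAINING))
```

Nothing in the code represents the problem-domain, and the last case, far from any previous one, is still labelled with no indication that the inference is unwarranted.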

So far, this review has considered the original conception of AI, an operational definition of AI, some key examples of AI embodied in artefacts, and some important AI techniques. Together, these provide a basis for consideration of AI's impacts.

My primary concern is with downsides. After all, there's no shortage of wide-eyed optimism about what AI might do for humankind. Many claims are nothing more than vague marketing-speak. The more credible claims, however, relate to:

Alongside its promise, AI embodies threats. There have been many expressions of serious concern about AI, including by theoretical physicist Stephen Hawking (Cellan-Jones 2014), Microsoft billionaire Bill Gates (Mack 2015), and technology entrepreneur Elon Musk (Sulleyman 2017). However, authors and speakers alike seldom make clear quite what they mean by AI, and their concerns tend to be long lists with limited structure; so conversations can be very confusing. Some useful sources include Dreyfus (1972), Weizenbaum (1976), Scherer (2016, esp. pp. 362-373), Yampolskiy & Spellchecker (2016), Mueller (2016) and Duursma (2018).

The approach I take to assessing the impacts and implications of AI associates its downsides with 5 key features (Clarke 2019a).

The first of these is artefact autonomy. We're prepared to delegate to cars and aircraft straightforward, technical decisions about fuel mix and gentle adjustments of flight attitude. We are, and we should be, much more careful about granting delegations to artefacts in relation to decisions that:

Serious problems arise when authority is delegated to an artefact that lacks the capability to draw inferences, make decisions and take actions that are reliable, fair, or 'right', according to some stakeholder's value-system.

It is necessary to identify 7 levels of autonomy, 3 of which are of the nature of decision systems, and 3 of which are decision support systems. At levels 1-3, the human is in charge, although an artefact might unduly or inappropriately influence the human's decision. At levels 4-5, on the other hand, the artefact is primary, with the human having a window of opportunity to influence the outcomes. At the final level, it is not possible for the human to exercise control over the act performed by the artefact.

The second area of concern is inappropriate assumptions about data. There are many obvious data quality factors, including accuracy, precision, timeliness, completeness, the general relevance of each data-item, and the specific relevance of the particular content of each data-item. Another consideration that is all too easy to overlook is the correspondence of the data with the real-world phenomena that the process assumes it to represent. That depends on appropriate identity association, attribute association and attribute signification.

It is common in data analytics generally, and in AI-based data analytics, to draw data from multiple sources. That brings in additional factors that can undermine the appropriateness of inferences arising from the analysis and create serious risks for people affected by the resulting decisions. Inconsistent definitions and quality-levels among the data-sources are seldom considered (Widom 1995). Data scrubbing (or 'cleansing') may be applied; but this is a dark art, and most techniques generate errors in the process of correcting other errors (Mueller & Freytag 2003). Claims are made that, with sufficiently large volumes of data, the impacts of low-quality data, matching errors, and low scrubbing-quality automatically smooth themselves out. This may be a justifiable claim in specific circumstances, but in most cases it is a magical incantation that does not hold up under cross-examination (boyd & Crawford 2011).
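A small simulation can show why volume alone does not rescue data quality. It is a sketch under stated assumptions: measurement noise is modelled as normally distributed with mean zero, and an inconsistent definition in one of two merged sources is modelled as a fixed offset affecting 30% of records. All figures are hypothetical.

```python
import random
import statistics
from typing import List

TRUE_VALUE = 100.0  # hypothetical real-world quantity being measured


def simulate_mean(n: int, offset_b: float, share_b: float = 0.3, seed: int = 1) -> float:
    """Mean of n merged records drawn from two hypothetical sources.

    Source A records the true value plus zero-mean noise. Source B uses an
    inconsistent definition, modelled as a fixed offset, plus the same noise.
    """
    rng = random.Random(seed)
    values: List[float] = []
    for _ in range(n):
        noise = rng.gauss(0.0, 10.0)
        offset = offset_b if rng.random() < share_b else 0.0
        values.append(TRUE_VALUE + offset + noise)
    return statistics.fmean(values)


# Columns: record count, mean with consistent sources, mean with an inconsistent source
for n in (100, 10_000, 100_000):
    print(n, round(simulate_mean(n, offset_b=0.0), 2), round(simulate_mean(n, offset_b=15.0), 2))
```

As n grows, the second column settles near 100 but the third settles near 104.5 (that is, 100 + 0.3 × 15): more data shrinks the random scatter, but leaves the systematic error untouched.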

Also far too common are inappropriate assumptions about the inferencing process that is applied to data. Each approach has its own characteristics, and is applicable to some contexts but not others. Despite that, training courses and documentation seldom communicate much information about the dangers inherent in mis-application of each particular technique.

One issue is that data analytics practitioners frequently assume, quite blindly, that the data they have available is suitable for the particular inferencing process they choose to apply. Data on nominal, ordinal and even cardinal scales is not suitable for the more powerful tools, because they require data on ratio scales. 'Assuming that ordinal data (most/more/middle/less/least) is ratio-scale data is not okay!' Mixed-mode data is particularly challenging. And the way in which missing values are handled is alone sufficient to deliver spurious results; yet the choice is often implicit rather than a rational decision based on a risk assessment. For any significant decision, assurance is needed of the data's suitability for use with each particular inferencing process.
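Both pitfalls can be illustrated with a short Python sketch on hypothetical data. The first part codes ordinal responses as numbers and compares two groups under two codings that preserve the order of the categories equally well; the second applies two common treatments of missing values. The mean and median stand in, very simply, for tools that do and do not assume ratio-scale data.

```python
import statistics
from typing import Dict, List, Optional

# Hypothetical survey responses on an ordinal scale, coded 1 (least) .. 5 (most).
group_a = [3, 3, 3, 3, 3]
group_b = [1, 1, 1, 5, 5]

# Two codings that preserve the order of the categories equally well.
coding_1: Dict[int, int] = {1: 1, 2: 2, 3: 3, 4: 4, 5: 5}
coding_2: Dict[int, int] = {1: 1, 2: 2, 3: 3, 4: 4, 5: 10}


def recode(responses: List[int], coding: Dict[int, int]) -> List[int]:
    return [coding[r] for r in responses]


for coding in (coding_1, coding_2):
    a, b = recode(group_a, coding), recode(group_b, coding)
    # The mean treats the codes as ratio-scale numbers; the median uses only their order.
    print("mean A > B:", statistics.mean(a) > statistics.mean(b),
          "| median A > B:", statistics.median(a) > statistics.median(b))
# mean A > B: True | median A > B: True
# mean A > B: False | median A > B: True

# Hypothetical values with gaps (None): two common, often implicit, treatments.
incomes: List[Optional[float]] = [50.0, 60.0, None, None, 70.0]
dropped = statistics.fmean([x for x in incomes if x is not None])
zero_filled = statistics.fmean([0.0 if x is None else x for x in incomes])
print(dropped, zero_filled)  # 60.0 36.0
```

The mean reverses its verdict when the coding changes, while the median does not; and the choice between dropping and zero-filling missing values moves the average from 60 to 36.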

The fourth issue is the opaqueness of inferencing processes. Some techniques used in AI are largely empirical, rather than being scientifically-based. Because the AI/ML method of artificial neural networks is not algorithmic, no procedural or rule-based explanation can be provided for the rationale underlying an inference. Inferences from artificial neural networks are a-rational, i.e. not supportable by a logical explanation.

Courts, coroners and ombudsmen demand explanations for the actions taken by people and organisations. Unless a decision-process is transparent, the analysis cannot be replicated, the process cannot be subjected to audit by a third party, the errors in the design cannot even be discovered, let alone corrected, and guilty parties can escape accountability for harm they cause. The legal principles of natural justice and procedural fairness are crucial to civil behaviour. And they are under serious threat from some forms of AI.

The dilution of accountability is closely associated with the fifth major issue with AI, which is irresponsibility. The AI supply-chain runs from laboratory experiment, via IR&D, to artefacts that embody the technology, to systems that incorporate the artefacts, and on to applications of those systems, and deployment in the field. Successively, researchers, inventors, innovators, purveyors, and users bear moral responsibility for disbenefits arising from AI. But the laws of many countries do not impose legal responsibility. The emergent AI regulatory regime in Europe actually absolves some of the players from incurring liability for their part in harmful technological innovation (Clarke 2021).

These five generic threats together mean that AI that may be effective in controlled environments (such as factories, warehouses, and thinly human-populated mining sites) faces far greater challenges in unstructured contexts with high variability and unpredictability (e.g. public roads, households, and human care applications). In the case of human-controlled aircraft, laws impose responsibilities for collision-detection capability, for collision-avoidance functionality, and for training in the rational thought-processes to be applied when the integrity of location information, vision, communications, power, fuel or the aircraft itself is compromised. On the other hand, devising and implementing such capabilities in mostly-autonomous drones is very challenging.

To get to grips with what AI means for people, organisations, economies and societies, those generic threats have to be considered in a wide variety of different contexts.

Many people have reacted in a paranoid manner, giving graphic descriptions, and generating compelling images, of notions such as a 'robot apocalypse', 'attack drones' and 'cyborgs with runaway enhancements'. In sci-fi novels and films, sceptical and downright dystopian treatment of AI-related ideas has a long and distinguished history. Some of the key ideas can be traced through these examples:

These sources are rich and interesting in the ideas they offer (and at least to some extent prescient). However, what we really need is categories and examples grounded in real-world experience, and carefully projected into plausible futures using scenario analysis. To the extent that AI delivers on its promise, the following are natural outcomes:

To the extent that these threads continue to develop, they are likely to mutually reinforce, resulting in the removal of self-determination and meaningfulness from at least some people's lives.

This discussion has focused on impacts on individuals, societies and the economy. Organisations are involved in the processes whereby the impacts arise. They are also themselves affected, both directly and indirectly. AI-derived decisions and actions that prove to have been unwise will undermine the reputations of, and trust in, organisations that have deployed AI. That will inevitably have negative impacts on adoption, deployment success and return on investment.

The value of artefacts has been noted in 'dull, dirty and dangerous work', and where they are demonstrably more effective than humans, particularly where computational reliability and speed of inference, decision and action are vital. In such circumstances, the human race has a century of experience in automation, and careful design, testing and management of AI components within such decision systems will doubtless deliver some benefits.

This requires impact assessment, or preferably multi-stakeholder risk assessment (Clarke 2022). Contexts in which it is particularly crucial that decision-making be reserved for humans include those that involve:

In all such circumstances, AI may assist in the design of decision support systems - but with the important proviso that AI that is essentially empirically-based, and incapable of providing explanations of an underlying rationale, must be handled very sceptically and carefully, and subject to safeguards, mitigation measures, and controls to ensure the safeguards and mitigation measures are functioning as intended.

Any potentially very harmful technology needs to be subjected to controls. The so-called 'precautionary principle' is applied in environmental law, and it needs to be considered for all forms of AI and autonomous artefact behaviour. At the very least, there is a need for Principles for Responsible AI to be not merely talked about, but imposed (Clarke 2019b, 2019c). Beyond that, particularly dangerous techniques and applications need to be subject to moratoria, which need to remain until an adequate regulatory regime is in place.

In this presentation, I've used the past as a springboard for understanding the present. As leaders, your focus is on using the present as a springboard for inventing the future.

To help with that, here are a few ideas on how we can approach the ideas of AI, smart artefacts and autonomous artefacts, so as to achieve positive outcomes and prevent, control and mitigate harmful impacts.

I reason this way:

So I believe that the focus on 'artificial intelligence' has wasted 70 years on a dumb idea.

We don't want 'artificial'; we want real.

We don't want more 'intelligence' of a human kind; we want artefacts to contribute to what we do, both mechanically and intellectually.

Useful intelligence in an artefact, rather than being like human intelligence, needs to be not like it, and instead different from it.

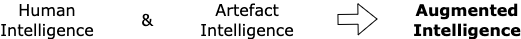

A more appropriate meaning for 'AI' would be 'Artefact Intelligence' (Clarke 2019a, pp.429-431), which:

Our focus should be on devising Artefact Intelligence so that it combines with Human Intelligence to deliver something superior to either of them. So an even more appropriate meaning for AI would be 'Augmented Intelligence', denoting 'Humans+':

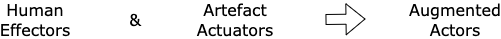

And we can pursue this further, beyond inferences and decisions, to action. Humans act through effectors like arms and fingers. Artefacts, in order to act in the world, are designed to include actuators. The Intellectual plus the Physical add up to Real-World Capability for Action.

Better yet, by devising artefacts to complement humans, we can achieve a combined Capability to do things superior to what either can achieve alone.

That provides us with a comprehensive notion that offers greater value. The final accumulation of ideas sees us devising Artefact Capability to be complementary to Human Capability, in order to deliver what I suggest is the real target-zone: Augmented Capability.

Your workshops require you to explore a recent or emerging smart technology, and Professor Mariano asked me to put a challenge in front of you. My challenge to you is:

Compare the smart technology you are exploring not against the outdated and unhelpful concept of 'Artificial Intelligence', but rather against the suite of ideas that I've put before you.

In particular:

In this presentation, I've provided an interpretation of the past of AI together with related ideas about artefact autonomy, in order to assist in understanding AI of the present.

I've suggested that the future lies in a different conception of 'AI', and preferably a shift in focus to a more comprehensive conception, 'AC'. This involves combining human intelligence and capability with complementary artefact intelligence and capability, to produce 'Augmented Intelligence' and 'Augmented Capability'.

The delivery of future AI and AC is no longer in the hands of the pioneer researchers and innovators. My generation is fast running out of time to correct the errors of the last 70 years. We're passing the baton to you.

Digital and intellectual technologies offer the human race enormous possibilities. Please find ways to apply those technologies, while managing the potentially enormous threats they harbour for individuals, economies and societies.

My thanks to Professor Mariano for the very interesting challenge that he set me when he invited me to address this topic, and my best wishes to you as you take up the challenge that I've set you.

The way in which the final section is presented is my own work. However, there are of course precursors for all of the ideas that it draws together. 'Artefact intelligence', for example, was defined in (Takeda et al. 2002, pp.1, 2) as "intelligence that maximizes functionality of the artifact", with the challenge being "to establish intentional/physical relationship between humans and artifacts"; and 'artefactual intelligence' was described by De Leon (2003) as "a measure of fit among instruments, persons, and procedures taken together as an operational system".

The notion of 'augmented intelligence' has a particularly long and to some extent cumulative history. Ashby (1956) worked on the notion of 'intelligence amplification' and Engelbart (1962) on 'augmenting human intellect'. In recent times, Zheng et al. (2017), for example, have used the cumbersome term 'Human-in-the-loop hybrid-augmented intelligence', but provide some articulation of the process; and an article in the business trade press has depicted 'augmented intelligence' as "an alternative conceptualization of AI that focuses on its assistive role in advancing human capabilities" (Araya 2019).

A paper by a division of the professional society IEEE (IEEE-DR 2019) referred to 'augmented intelligence' as using machine learning and predictive analytics not to replace human intelligence but to enhance it. Computers are regarded as tools for mind extension in much the same way as tools are extensions of the body (or, as I prefer to express it, extensions of human capability).

However, in describing the human-computer relationship using the biological term 'symbiotic', the paper effectively treats artefacts as equals with humans. Moreover, the IEEE paper expressly adopts the transhumanism and posthumanism notions, in which it is postulated that a transition will occur to a new species driven by technology rather than genetics. These sci-fi-originated and essentially mystical ideas deflect attention away from the key issue, which is to move beyond what we now recognise as a naive idea of artificial intelligence, and focus on artefacts as tools to support the human mind as well as the human body.

Albus J.S. (1991) 'Outline for a theory of intelligence' IEEE Trans Syst, Man Cybern 21, 3 (1991) 473-509, at http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.410.9719&rep=rep1&type=pdf

Araya D. (2019) '3 Things You Need To Know About Augmented Intelligence' Forbes Magazine, 22 January 2019, at https://www.forbes.com/sites/danielaraya/2019/01/22/3-things-you-need-to-know-about-augmented-intelligence/?sh=5ee58aa93fdc

Ashby R. (1956) 'Design for an Intelligence-Amplifier' in Shannon C.E. & McCarthy J. (eds.) 'Automata Studies' Princeton University Press, 1956, pp. 215-234

Asimov I. (1968) 'I, Robot' (a collection of short stories originally published between 1940 and 1950), Grafton Books, London, 1968

Asimov I. (1985) 'Robots and Empire' Grafton Books, London, 1985

boyd D. & Crawford K. (2011) 'Six Provocations for Big Data' Proc. Symposium on the Dynamics of the Internet and Society, September 2011, at http://ssrn.com/abstract=1926431

Brunner J. (1975) 'The Shockwave Rider' Harper & Row, 1975

Capek K. (1923) 'R.U.R. (Rossum's Universal Robots)' Doubleday Page and Company, 1923 (orig. published in Czech, 1918, 1921)

Cellan-Jones R. (2014) 'Stephen Hawking warns artificial intelligence could end mankind' BBC News, 2 December 2014, at http://www.bbc.com/news/technology-30290540

Clarke R. (1991) 'A Contingency Approach to the Application Software Generations' Database 22, 3 (Summer 1991) 23-34, PrePrint at http://www.rogerclarke.com/SOS/SwareGenns.html

Clarke R. (2019a) 'Why the World Wants Controls over Artificial Intelligence' Computer Law & Security Review 35, 4 (Jul-Aug 2019) 423-433, at https://doi.org/10.1016/j.clsr.2019.04.006, PrePrint at http://rogerclarke.com/EC/AII.html

Clarke R. (2019b) 'Principles and Business Processes for Responsible AI' Computer Law & Security Review 35, 4 (Jul-Aug 2019) 410-422, at https://doi.org/10.1016/j.clsr.2019.04.007, PrePrint at http://rogerclarke.com/EC/AIP.html

Clarke R. (2019c) 'Regulatory Alternatives for AI' Computer Law & Security Review 35, 4 (Jul-Aug 2019) 398-409, at https://doi.org/10.1016/j.clsr.2019.04.008, PrePrint at http://rogerclarke.com/EC/AIR.html

Clarke R. (2021) 'Would the European Commission's Proposed Artificial Intelligence Act Deliver the Necessary Protections?' Working paper, Xamax Consultancy Pty Ltd, August 2021, at http://www.rogerclarke.com/DV/EC21.html

Clarke R. (2022) 'Evaluating the Impact of Digital Interventions into Social Systems: How to Balance Stakeholder Interests' Working Paper for ISDF, 1 June 2022, at http://rogerclarke.com/DV/MSRA-VIE.html

De Leon D. (2003) 'Artefactual Intelligence: The Development and Use of Cognitively Congenial Artefacts' Lund University Press, 2003

Dick P.K. (1968) 'Do Androids Dream of Electric Sheep?' Doubleday, 1968

Dreyfus H.L. (1972) 'What Computers Can't Do' MIT Press, 1972; Revised edition as 'What Computers Still Can't Do', 1992

Duursma J. (2018) 'The Risks of Artificial Intelligence' Studio OverMorgen, May 2018, at https://www.jarnoduursma.nl/the-risks-of-artificial-intelligence/

Engelbart D.C. (1962) 'Augmenting Human Intellect: A Conceptual Framework' SRI Summary Report AFOSR-3223, Stanford Research Institute, October 1962, at https://dougengelbart.org/pubs/augment-3906.html

Forster E.M. (1909) 'The Machine Stops' Oxford and Cambridge Review, November 1909, at https://www.cs.ucdavis.edu/~koehl/Teaching/ECS188/PDF_files/Machine_stops.pdf

Gibson W. (1984) 'Neuromancer' Ace, 1984

IEEE-DR (2019) 'Symbiotic Autonomous Systems: White Paper III' IEEE Digital Reality, November 2019, at https://digitalreality.ieee.org/images/files/pdf/1SAS_WP3_Nov2019.pdf

Kurzweil R. (2005) 'The singularity is near' Viking Books, 2005

McCarthy J. (2007) 'What is artificial intelligence?' Department of Computer Science, Stanford University, 2007, at http://www-formal.stanford.edu/jmc/whatisai/node1.html

McCarthy J., Minsky M.L., Rochester N. & Shannon C.E. (1955) 'A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence' Reprinted in AI Magazine 27, 4 (2006), at https://www.aaai.org/ojs/index.php/aimagazine/article/viewFile/1904/1802

Mack E. (2015) 'Bill Gates says you should worry about artificial intelligence' Forbes Magazine, 28 January 2015, at https://www.forbes.com/sites/ericmack/2015/01/28/bill-gates-also-worries-artificial-intelligence-is-a-threat/

Mueller V.C. (ed.) (2016) 'Risks of general intelligence' CRC Press, 2016

Pollack A. (1992) '"Fifth Generation" Became Japan's Lost Generation' The New York Times, 5 June 1992, at https://www.nytimes.com/1992/06/05/business/fifth-generation-became-japan-s-lost-generation.html

Russell S.J. & Norvig P. (2003) 'Artificial intelligence: a modern approach' 2nd edition, Prentice Hall, 2003

Scherer M.U. (2016) 'Regulating Artificial Intelligence Systems: Risks, Challenges, Competencies, and Strategies' Harvard Journal of Law & Technology 29, 2 (Spring 2016) 354-400

Simon H.A. (1960) 'The shape of automation' Reprinted in various forms, 1960, 1965, quoted in Weizenbaum J. (1976), pp. 244-245

Simon H.A. (1996) 'The sciences of the artificial' 3rd ed., MIT Press, 1996

Stephenson N. (1995) 'The Diamond Age' Bantam Books, 1995

Sulleyman A. (2017) 'Elon Musk: AI is a "fundamental existential risk for human-civilisation" and creators must slow down' The Independent, 17 July 2017, at https://www.independent.co.uk/life-style/gadgets-and-tech/news/elon-musk-ai-human-civilisation-existential-risk-artificial-intelligence-creator-slow-down-tesla-a7845491.html

Takeda H., Terada K. & Kawamura T. (2002) 'Artifact intelligence: yet another approach for intelligent robots' Proc. 11th IEEE Int'l Wksp on Robot and Human Interactive Communication, September 2002, at http://www-kasm.nii.ac.jp/papers/takeda/02/roman2002

Weizenbaum J. (1966) 'ELIZA--a computer program for the study of natural language communication between man and machine' Commun. ACM 9, 1 (Jan 1966) 36-45, at https://dl.acm.org/doi/pdf/10.1145/365153.365168

Weizenbaum J. (1976) 'Computer power and human reason' W.H. Freeman & Co., 1976

Yampolskiy R.V. & Spellchecker M.S. (2016) 'Artificial Intelligence Safety and Cybersecurity: a Timeline of AI Failures' arXiv, 2016, at https://arxiv.org/pdf/1610.07997

Zheng N., Liu Z., Ren P., Ma Y., Chen S., Yu S., Xue J., Chen B. & Wang F. (2017) 'Hybrid-augmented intelligence: collaboration and cognition' Frontiers of Information Technology & Electronic Engineering 18, 1 (2017) 153-179, at https://link.springer.com/article/10.1631/FITEE.1700053

Roger Clarke is Principal of Xamax Consultancy Pty Ltd, Canberra. He is also a Visiting Professor associated with the Allens Hub for Technology, Law and Innovation in UNSW Law, and a Visiting Professor in the Research School of Computer Science at the Australian National University.


The content and infrastructure for these community service pages are provided by Roger Clarke through his consultancy company, Xamax. From the site's beginnings in August 1994 until February 2009, the infrastructure was provided by the Australian National University. During that time, the site accumulated close to 30 million hits. It passed 65 million in early 2021. Sponsored by the Gallery, Bunhybee Grasslands, the extended Clarke Family, Knights of the Spatchcock and their drummer.

Xamax Consultancy Pty Ltd ACN: 002 360 456 78 Sidaway St, Chapman ACT 2611 AUSTRALIA Tel: +61 2 6288 6916

Created: 20 March 2022 - Last Amended: 6 June 2022 by Roger Clarke - Site Last Verified: 15 February 2009

